The TechEd North America 2012 conference was so chock full of presentations, workshops, labs and informational sessions that many developers who attended probably couldn't cram into their schedule everything in which they were interested.

And if you couldn't attend, you might be feeling some information envy, afraid that you're falling behind the curve in learning the latest and greatest.

But fear not. Microsoft offered a great service by recording the sessions and putting them up online 48 hours later.

In fact, even though I couldn't attend in person, I signed in to the TechEd site with my Windows Live ID and identified sessions I wanted to check out to develop my own little "virtual" schedule. (You can also quickly sign up for a TechEd-only account.) I checked off the events in which I was interested and they were added to "myTechEd Portal," from which I could download slides and watch videos.

For example, I just had to visit my portal, click on "My Content" and I was presented with the list of my chosen events, complete with links to the videos and slides. Being the Data Driver, of course, I was mostly interested in database-related presentations, so I watched "The 12 Reasons to Love Microsoft SQL Server 2012," "Business Intelligence and Data Visualization: Microsoft SQL Server 2012" and others. Not all sessions are available online, though. For example, I couldn't watch "The New World of Data: SQL Server and Hybrid IT," but I could download the PowerPoint slides.

Even though the event was held last week, I can still add sessions to my schedule and have them available for reference in one place. And the best part is, you can, too. One way to do that is to go to the site, click on the top-level Content menu item and then the Catalog item. That brings up a list of everything, categorized by Track, Speaker, Product/Technology and more. You can still check the box next to "Database & Business Intelligence" and get a list of 92 sessions. Each and every session is listed, with icons to indicate availability of videos and slides.

You also can see the sessions for Microsoft SQL Azure and Microsoft SQL Server under the Product/Technology filter. The former, for example, lists seven sessions, and every one has an accompanying video.

Don't forget that you can also find many (maybe all?) of these sessions on the Channel9 site. I actually prefer that because you're offered the choice of viewing the videos online or downloading them in a variety of formats and quality levels (and even audio-only) for viewing on different devices, such as your desktop or (gasp!) even an iPad (yes, that's listed on the site under the "High Quality MP4" option).

I'd write more, but I just found "Migrating SQL Server database applications to Windows Azure Virtual Machine" on Channel9, by Guy Bowerman and Evgeny Krivosheev, length 1 hour, 2 minutes, 29 seconds.

Happy viewing (hopefully soon on a Surface, not an iPad!).

What was your favorite database development video from TechEd? Share your suggestions here or drop me a line.

Posted by David Ramel on 06/26/2012 | 0 comments

I've been playing around with the new SQL Server Data Tools, and although many have complained about missing features compared to the "Data Dude" Visual Studio projects and other tools that SSDT somewhat replaces, I'm impressed by the way Microsoft has gotten the product out there for review and is improving it with functionality requested by those same users.

SSDT, if you're not familiar with it, is the hodgepodge of improvements to database development in Visual Studio that was formerly known by the codename "Juneau." It comes free with the new SQL Server 2012 and just-released Visual Studio 2012 Release Candidate and can be installed from the Web. Even describing SSDT is somewhat complicated, as it consists of so many different "things." For example, besides replacing and improving the Visual Studio for Database Professionals ("Data Dude") product, it's also the new place to find former Business Intelligence Development Studio (BIDS) functionality such as Analysis Services, Reporting Services and Integration Services. It's also kind of a Visual Studio in-house replacement for SQL Server Management Studio so you don't have to keep switching back and forth between the two. It also adds a bunch of new features and improved functionality across many areas. Basically it enhances and simplifies the (admitted by Microsoft) difficult database development experience in Visual Studio.

I'll explore some of the individual SSDT components later, but here I'll discuss some of the features that didn't make it into the initial SSDT release and what Microsoft is doing about them. For whatever reason, SSDT shipped somewhat incomplete with the release of SQL Server 2012 in April. For example, commenting on an SSDT beta release, a user in May of last year offered up the following list of missing features as compared to VS2010 Database Projects.

- Schema View

- Dependency Viewer

- Data Compare

- Database Unit Testing

- Data Generation

- Extensibility in General

"Is this the state in which it will ship?" the user asked. "Will VS2010 continue to evolve?"

Microsoft's Jeff Welton replied:

"A very thorough list for the CTP gap compared to TSData, though I'd also hasten to add the number of features that Juneau currently provides that TSData does not, such as Power Buffer, Server Explorer integration for declarative work, Find All References/Goto def, and Table Designer.

In the coming weeks and months we'll have more announcements over our offerings, projected timeframes, and plans for the future. All of the features you mention are on our minds."

In further discussion about the first item on the "missing" list, Schema View, Welton said: "I don't have a definitive answer to provide you on the Schema View at this point in time; however, we do recognize the need. ..."

And he wasn't kidding. Notice this announcement from a couple months ago -- one day after the release of SQL Server 2012 -- from Microsoft's Janet Yeilding:

"I am pleased to announce the first release of SSDT Power Tools. The SSDT team will be leveraging Power Tools as a mechanism to deliver new functionality and experiences through frequent updates, of which this is the first example. In this first iteration, we focused on addressing the feedback that SSDT does not offer the equivalent of the Schema View tool window found in the Visual Studio 2010 Database Project. This release of the SSDT Power Tools will add the Schema View capabilities back by extending the SQL Server Object Explorer, providing a logical view over the schema hosted inside your projects."

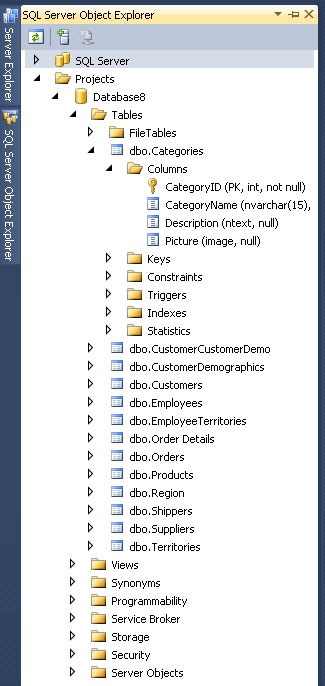

Installing the Power Tools resulted in a new "Projects" node in SSDT's new SQL Server Object Explorer, or SSOX (with SSMS-like functionality), where you can see the schema of your database and add, edit or refactor the various objects. Fig. 1 shows what it looks like in Visual Studio 2010. (This also worked in the Visual Studio 11 Beta, but I couldn't immediately get it to work with the Release Candidate, and I have a question in to Microsoft about that.)

Figure 1. The new Schema View.

With the Schema View, you can drill down into the database structure -- or rather a model of the database structure -- and add or edit objects. For example, you can change the name of a column and get a preview of the script to make the change. After executing that, you can hit F5 to deploy the project and see the change reflected in the actual database, displayed in the SSOX under the SQL Server node (above the Projects node in Fig. 1).

You ask for Schema View, you get Schema View. Now, how's that for service?

And there's surely more to come. Gert Drapers, the original "Data Dude" and data platform guru at Microsoft, chimed in, "The Data Comparison, Database Unit Testing and Data Generation functionality will be added to SSDT post Visual Studio 11 RTM + 3 months." Seeing how the (now renamed) Visual Studio 2012 Release Candidate just went live, we can look forward to trying these out fairly soon.

Another user provided his own must-have wish list for SSDT on The Code Project:

- Resolve references to other production and system databases like MSDB

- Resolve references to other production databases connected by a linked server

- Support of migration scripts to enable data preservation and to add static data

- Fast and reliable build of deployment scripts

- Possibility of executing and deploying a single file

- Detection of isolated changes in the target database

- Responsive SSDT GUI as compared to SQL Server Management Studio

- Comfortable T-SQL writing

- Extendable usage of snippets

He wrote about his thorough evaluation of SSDT and concluded that "There was no feature in my list not supported in SSDT, but some features still have room for improvement."

But SSDT won't get all of the functionality enjoyed by database developers who are used to using previous tools. For example, one user in an SSDT forum requested "a Query tool like the one we have in the old Server Explorer 'New Query' option." But there will be no graphical query designer. Microsoft's Janet Yeilding replied: "A query designer or robust query editor is not in our future plans for SSDT.... As a workaround, I'd recommend using SSMS for your query authoring." Note, however, that a query designer, database diagrams and reference data support had been reported to be "on the horizon" in presentations such as at the TechEd conference about a year before Yeilding's post (see the PowerPoint slides).

Also, integration with Entity Framework, which actually worked in the Visual Studio 11 Developer Preview, was removed in the Visual Studio 11 Beta, as the SSDT blog explained in March. A post said: "Based on the feedback received and the remaining engineering work that would have been required to complete the experience, the decision was made to not include this functionality in Visual Studio 11."

And with last week's release of the Visual Studio 2012 Release Candidate, be warned that there can be some installation issues if you have SSDT installed.

For a summation of missing/included SSDT features, the team blog last fall provided a (now slightly outdated) comparison of SSDT CTP4 and VS2010 Database Projects features.

You can also find other SSDT feature requests on the SSDT forum (search for "feature request").

What features would you like to see in SSDT? Share your thoughts on the new database development experience in Visual Studio by commenting here or dropping me a line.

Posted by David Ramel on 06/05/2012 | 1 comments

In the midst of my playing around with the new SQL Server Data Tools (SSDT), the Visual Studio 2012 Release Candidate came out. So I thought I'd upgrade to the RC. Silly me. One of these days, I'm going to learn to do a little advance research before blindly plunging into these kinds of projects.

I spent hours repairing, removing and re-installing various packages until I seemingly got it to work correctly (except for the SSDT Power Tools' Schema View functionality). Unfortunately, I didn't take notes because I didn't think it was going to be that much of a problem, but it turns out a lot of other people have experienced the exact same issues. A reader on the Visual Studio blog announcing the RC summed it up nicely: "Avoid this for now if you have SSDT installed, you are greeted with a lot of errors both in VS 2012 AND VS 2010 after upgrading."

I wouldn't say you should avoid the upgrade, though. They do work together. More information on the problems experienced and how some users got the two products to play nicely together can be found on this forum thread. Definitely read it before you install the RC with SSDT.

Also, note the following from the "Visual Studio 2012 RC Compatibility" page in the MSDN Library about compatibility with Visual Studio 2010 Database (.dbproj) projects:

If you convert the project to a SQL Server Data Tools Database project, you can open it in Visual Studio 2012 RC. However, Visual Studio 2012 RC doesn't support the following artifacts:

- unit tests

- data-generation plans

- data-comparison files

- custom rule extensions for static code analysis

- server.sqlsettings

- .sqlcmd files

- custom deployment extensions

- partial projects (.files)

If you install SQL Server Data Tools, you can open the project in Visual Studio 2010 with SP1 after the migration. For more information, see Microsoft SQL Server Data Tools.

It's a different story for Visual Studio 2008 database projects, though, as noted in the RC Read Me file:

1.3.5 Database Development

1.3.5.1 Upgrade of Visual Studio 2008 database projects to SSDT is not supported

When a Visual Studio 2008 database project is opened in Visual Studio 2012 RC, the user is prompted to upgrade. Completion of the upgrade steps appears to succeed, but the project still cannot be loaded because an upgrade to SQL Server Data Tools (SSDT) is not supported.

To resolve this issue:

There is no workaround.

I'm not slamming Microsoft here at all. These issues happen all the time with pre-release products. Considering the complexity of the software involved and the myriad possible configurations and setups of user systems, I'm frankly amazed that they work as well as they do. I could have saved myself a lot of time and effort by slowing down, getting organized, doing advance research and taking notes as I proceeded, preferably on a virtual machine in case something went wrong. Lesson learned.

Yeah, right.

What do you think about the RC? Why are so many developers so upset about the use of ALL CAPS menus? Comment here or drop me a line.

UPDATE: Janet Yeilding on Thursday posted a blog item explaining the issue: symptoms, cause and workaround. The workaround, taken directly from her post:

To fix your installation, find Microsoft SQL Server 2012 Data-Tier App Framework in Programs and Features and Repair it. You may have multiple versions of DacFx, so make sure to repair version 11.0.2100.60 (note that on x64 machines, there will be two entries for 11.0.2100.60 and both should be repaired). After this step, SSDT should load successfully in all Visual Studio shells.

-- DR

Posted by David Ramel on 06/05/2012 | 1 comments

Here's a new one: The maker of a new high-performance database claims it especially lends itself to development using the popular Model-View-ViewModel (MVVM) programming pattern.

The in-memory database comes from Starcounter, a Swedish company that last week announced what it calls the world's "fastest consistent database" thanks to patent-pending technology (VMDBMS) that melds an application virtual machine with a database management system. Starcounter said it avoids fragmentation and keeps data in one place in RAM -- rather than copying it back and forth from disk to RAM and from the database to the application like other systems. This allows the database to reportedly attain speeds 10 times faster than other high-performance systems and 100 times faster than more traditional RDBMSs.

While that's all relatively typical feeds-and-speeds stuff, I thought it was interesting that company founder Joachim Wester told me the new product offers special advantages to database developers because it lends itself to the MVVM pattern. That pattern, of course, is currently all the rage in the .NET Framework development world, allowing separation of presentation and business-logic concerns, enabling better unit testing and letting everybody know that you use the latest and greatest programming fad.

"Having your business objects as your database makes popular .NET patterns such as MVVM (Knockout or Angular) very natural," Wester said in an e-mail interview. He expounded:

"Starcounter can host the ViewModel on the server. When using popular frameworks such as KnockoutJS, you can program all your logic in C#. You manipulate the ViewModel on the server. Starcounter transparently syncs it with the browser. As most ViewModels derive their data from the database, the server can inexpensively keep each browser's ViewModel live on the server … the data was in RAM to begin with anyway. And as the server controls the ViewModel, you get security without an effort. It simply does not matter if you manipulate the client code. You can leave out the JavaScript programming and code simple local type-checked C#. Our favorite MVVM stack is Starcounter combined with Angular, a Knockout alternative from Google."

Sounds pretty cool, except for that Google thing. What about .NET? "Starcounter hooks into the .NET [Framework] itself," Wester said. "It is not a framework. It lives underneath .NET. So if you know how to program in .NET, you already know how to use Starcounter."

Wester also pointed out other benefits for database developers using Starcounter, primarily more simplicity, or, as he put it: "no separate database schema, no glue code, no O/R mapping." And with this simplicity reducing the lines of code a programmer has to write, the overall development effort is simplified and bug-tracking efforts are reduced, he said.

See the company's Web site for more information on the new database, currently available as a free beta download to a limited number of customers.

What do you think? Is Starcounter on to something? Will this new database simplify your development? Comment here or drop me a line.

Posted by David Ramel on 05/21/2012 | 0 comments

Microsoft recently updated the Open Data Protocol (OData) and WCF Data Services framework and just last week provided some demo services so data developers can try out the new features.

The WCF Data Services 5.0 release offers libraries for .NET 4 and Silverlight 4 and a slew of new client and server features, including support for actions, vocabularies, geospatial data, serialization/deserialization of OData payloads, "any" and "all" functions in queries and more (including a new JSON format).

OData, now at version 3, is the Web protocol for querying data using HTTP, Atom Publishing Protocol (AtomPub) and JSON, released by Microsoft under the Open Specification Promise so third-party and open-source projects can build clients and services for it. Documentation for V3 is now available.

The three new V3 demo services include simple read-only and read-write models for Products, Categories and Suppliers, and a read-only service that exposes the trusty Northwind database.

The new support for actions looks promising, providing, for example, a Discount action for Products that takes a discountPercentage integer as a parameter and decreases the price of the product by that percentage, as shown on the demo services page.
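As a rough illustration of the arithmetic such an action performs, here is a minimal sketch in Python (chosen only as a compact stand-in; it is not the demo service's implementation). The function name `apply_discount` and the 0-to-100 validation are assumptions made for the example; only the integer `discountPercentage` parameter comes from the description above.

```python
from decimal import Decimal


def apply_discount(price: Decimal, discount_percentage: int) -> Decimal:
    """Return the price reduced by the given whole-number percentage.

    Hypothetical helper: the 0..100 range check is an assumption,
    not something documented for the demo service.
    """
    if not 0 <= discount_percentage <= 100:
        raise ValueError("discount_percentage must be between 0 and 100")
    # Decimal avoids binary floating-point rounding surprises with money.
    return price * (Decimal(100) - Decimal(discount_percentage)) / Decimal(100)


if __name__ == "__main__":
    # A 25 percent discount on a product priced at 20.00
    print(apply_discount(Decimal("20.00"), 25))
```

Using `Decimal` rather than `float` is a design choice for currency values, not something the service description specifies.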

But I decided to quickly try out something a little simpler just as a proof of concept: the new "any" and "all" operators. They allow tacking onto URLs filters such as this example shown on the demo services page:

http://services.odata.org/V3/OData/OData.svc/Categories?$filter=Products/any(p: p/Rating ge 4)
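If you issue a request like this from code rather than pasting it into a browser, the spaces in the filter generally need to be percent-encoded. The following Python sketch builds the same URL; the helper names `lambda_filter` and `build_query_url` are made up for illustration, and the choice of which characters to leave unencoded is an assumption rather than anything the OData documentation prescribes.

```python
from urllib.parse import quote

# Service root taken from the demo services URL shown above.
SERVICE_ROOT = "http://services.odata.org/V3/OData/OData.svc"


def lambda_filter(collection: str, operator: str, var: str, predicate: str) -> str:
    """Build an 'any' or 'all' filter such as Products/any(p: p/Rating ge 4)."""
    if operator not in ("any", "all"):
        raise ValueError("operator must be 'any' or 'all'")
    return f"{collection}/{operator}({var}: {var}/{predicate})"


def build_query_url(entity_set: str, filter_expr: str) -> str:
    """Append a percent-encoded $filter to the entity set URL.

    Assumption: '/', '(', ')' and ':' are left as-is for readability;
    spaces become %20.
    """
    return f"{SERVICE_ROOT}/{entity_set}?$filter={quote(filter_expr, safe='/():')}"


if __name__ == "__main__":
    expr = lambda_filter("Products", "any", "p", "Rating ge 4")
    print(build_query_url("Categories", expr))
```

Swapping `"any"` for `"all"` in the call produces the corresponding "all" filter.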

As WCF Data Services supports LINQ, I experimented with the "any" and "all" operators in a LINQ query via a Visual Studio project, using the MSDN Library Quickstart here.

I changed this query:

    var ordersQuery = from o in context.Orders.Expand("Order_Details")
                      where o.Customer.CustomerID == customerId
                      select o;

to this query (note the use of the "All" operator):

    var ordersQuery = context.Orders.Expand("Order_Details")
        .Where(c => c.Order_Details.All(p => p.Quantity > 50));
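The two operators answer different questions, which is easy to see on a small in-memory data set. This Python sketch is only an analogy for the LINQ operators, not WCF Data Services code; the sample orders and the function names are invented for the example.

```python
# Hypothetical sample data: each order carries the quantities of its detail lines.
ORDERS = [
    {"order_id": 1, "quantities": [60, 70]},  # every line above 50
    {"order_id": 2, "quantities": [10, 80]},  # only one line above 50
    {"order_id": 3, "quantities": [5, 20]},   # no line above 50
]


def orders_where_all(orders, threshold):
    """Orders in which every detail line exceeds the threshold (like All)."""
    return [o["order_id"] for o in orders
            if all(q > threshold for q in o["quantities"])]


def orders_where_any(orders, threshold):
    """Orders in which at least one detail line exceeds the threshold (like Any)."""
    return [o["order_id"] for o in orders
            if any(q > threshold for q in o["quantities"])]


if __name__ == "__main__":
    print(orders_where_all(ORDERS, 50))
    print(orders_where_any(ORDERS, 50))
```

One detail worth keeping in mind: Python's `all()` returns `True` for an empty sequence, as LINQ's `All` does, so an order with no detail lines would pass the "all" test.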

Sure enough, this query didn't work with the old Northwind service, but it worked after simply inserting "V3" into the service URL so it looks like:

http://services.odata.org/V3/Northwind/Northwind.svc/

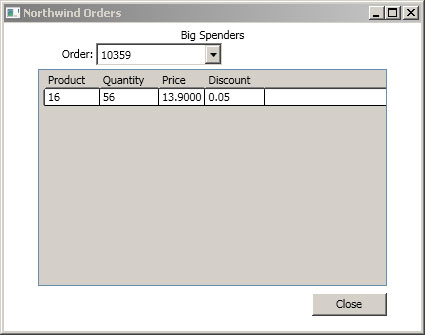

Fig. 1 shows the result of my efforts, in a WPF application showing customer orders with a quantity of more than 50.

Figure 1. A WPF app successfully pulls Northwind orders via a LINQ query using the new "Any" operator.

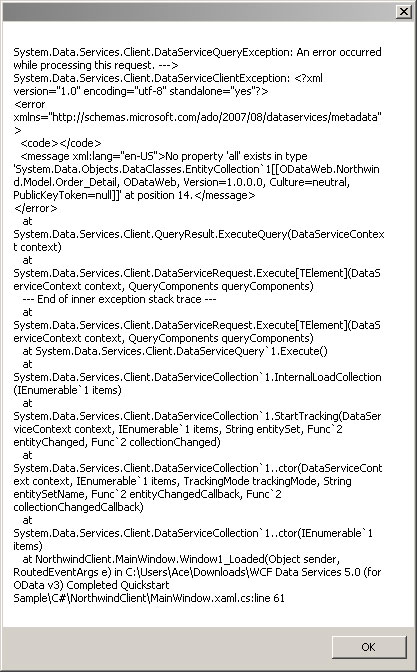

Without the "V3" in the service URL, though, you get an error message (see Fig. 2).

Figure 2. Not using the new V3 OData service results in an error.

I recommend the Quickstart as an easy way to experiment with the new OData features, but you have to plug them in yourself because it doesn't use them, though it does require WCF Data Services 5. The completed project files are available if you don't want to go through the whole process of creating each project and would rather just plug new feature functionality into them, as I did.

More improvements may be coming soon to WCF Data Services, as the team is now using "semantic versioning" and NuGet, as other products such as Entity Framework have done. One reader asked about support for "Join," while Microsoft's Glenn Gailey has a list of improvements he'd like to see, including support for enums, client JSON support, functions and more (note that this wish list is included in a post of his favorite things that did make it into the new versions).

Share your thoughts on the new versions of OData and WCF Data Services. Comment here or drop me a line.

Posted by David Ramel on 05/14/2012 | 0 comments

Well, I'm still astonished that I risk trashing my entire system if I try out some evaluation software, as I was told by readers a while back after a nightmarish experience trying to remove the SQL Server 2008 R2 beta and install the free Express version.

Some of the reader comments roasted me for being such a fool, along the lines of this from "Paul":

"By its very nature eval software is not to be installed on any machine you don't care about needing to be rebuilt from the ground up. Hasn't anybody ever read the warnings included with installation of eval software? If you are 'experimenting' with new software on a machine that cannot be wiped and rebuilt then the onus is on YOU not Microsoft."

That just doesn't seem right to me (and a lot of other people on the Net -- so there, "Paul").

Anyway, since losing a good chunk of my life recovering from that fiasco, I've been reluctant to try out some of the exciting new stuff that's come out in the database development world, as I only have one PC to work on and I need it to keep working.

But I enjoy checking out these new products and sharing my and others' experiences so we can all learn new things. And with the new SQL Server beta and other new evaluation software out, I'm dying to try them out. So I'm dusting off an old desktop to make it my dev machine.

It's almost 10 years old, but it has a fast processor, 4GB of RAM and a couple of decent hard disks (I used to use it a lot for video editing and related graphics stuff). Single core, but with the old "HyperThreading" technology to kind of emulate multiple cores. So far, so good. I've installed Windows 7, Visual Studio 2010, the Visual Studio 11 beta and Express beta, SQL Server 2012 beta and some related packages. The only problem I've had in these early stages is that my old graphics card doesn't support DirectX10 so I can't run the Windows Phone SDK 7.1 phone emulator, but I'm looking to soon buy a cheap card that fits the minimum bill (gotta love Craigslist).

As this is my first dev machine, I've looked around the Web for advice. I found Scott Hanselman's "2011 Ultimate Developer and Power Users Tool List for Windows" useful, but he must have 500 items listed there. I don't have time to even read through the whole list, much less download and install the recommended products. What, does he have a 5TB RAID or something to store this stuff on?

Also, I've spent more than a good long day downloading and installing this software, so I feel kind of leery spending a bunch more time to flesh out a system that I might have to rebuild from the ground up just because I try out some evaluation software. I only want to install the best, nearly essential stuff.

So I'm looking to you, dear database developer reader, for advice from the learned -- those who have traveled here before me. What (free) tools, packages, products are most essential to help me explore new database development products? What do you personally absolutely NEED to have on hand as you try out Visual Studio, SQL Server and other evaluation software? Also, what tips do you have on configuration or system optimization?

Please comment here or drop me a line. And, oh, "Paul," I really don't need to hear from you again, thanks.

Posted by David Ramel on 05/01/2012 | 4 comments

Having recently set up an old desktop as a dev machine in order to avoid trashing my main computer with evaluation software (don't get me started), I decided it was time to try out Visual Studio 11 Beta and its new features that promise to ease database development.

SQL Server Data Tools, released with SQL Server 2012 and coming with Visual Studio 11 Beta, are interesting and certainly worth a look soon, but I decided to first check out something a little simpler that also purportedly makes life easier for database developers in the IDE: LocalDB.

It's a new version of SQL Server Express with easy installation and use with no onerous configuration or management required by developers who just want to write code and create applications. While meant for development/testing, Microsoft said it can be used as a production embedded database, also.

You get it as an option when you download the free SQL Server 2012 Express.

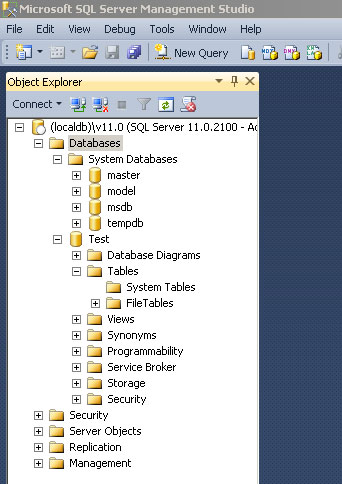

Download and installation went fairly smoothly, so I started to tinker. Using LocalDB in SQL Server Management Studio (SSMS) was as simple as typing "(localdb)\v11.0" into the Connect to Server dialog box. After connecting, you can see you're using LocalDB in the Object Explorer, as shown in Fig. 1.

Figure 1. Connecting to LocalDB in SQL Server Management Studio.

You can do the same thing in Visual Studio 11 Beta from the new SQL Server Object Explorer -- described by Microsoft as designed to reflect the look, feel and functionality of SSMS -- by right-clicking the SQL Server node and choosing Add SQL Server, which brings up the same connection dialog box.

I decided to check out some MSDN Library instructional pages to see how to use LocalDB, keeping in mind that it's preview-only, subject-to-change documentation. The MSDN Library "Creating a SQL Server Express LocalDB Database" walkthrough seemed like a good place to start.

I completed the walkthrough successfully without major problems. It involves creating a Windows Forms project, adding a database and then creating tables for it. Be aware that in discussion of the Table Designer, the walkthrough has a note explaining that a new Table Designer is used in the SQL Server 2012 Release Candidate 0, while the walkthrough uses the "old version of Table Designer." The note contains a link to directions about how to use the new Table Designer, in the MSDN Library SQL Server Data Tools section. So, because the steps are similar but the table names and fields and other details aren't the same as in the walkthrough, I just had to go back and forth between pages to complete the tutorial.

The Table Designer is pretty cool, letting you create tables either in a graphical Columns Grid where you enter row information via the GUI fields for name, data type, nulls allowed and so on, or in a Script Pane where you write out the actual code, such as "CREATE TABLE [dbo].[Customers]." When using the latter, you can just click an Update button at the top of the designer to generate a script to update the database to reflect the changes in the graphical Columns Grid.

After creating the tables, constraints and foreign keys and clicking Update one last time, I populated the sample tables with data from the venerable Northwind database. This involved creating a connection to the Northwind database, showing table data for Customers and Products and simply copying and pasting the table data into the appropriate SampleDatabase.mdf tables I had created. Note, however, that to get some older versions of the Northwind (or any) database to work with LocalDB, you need to upgrade it to work with SQL Server 2012. This is as simple as attaching to the database in SQL Server 2012 Management Studio and then detaching it.

I ended the walkthrough with a SampleDatabase.mdf database file that I used in a companion walkthrough, "Connecting to Data in a SQL Server Express LocalDB Database (Windows Forms)."

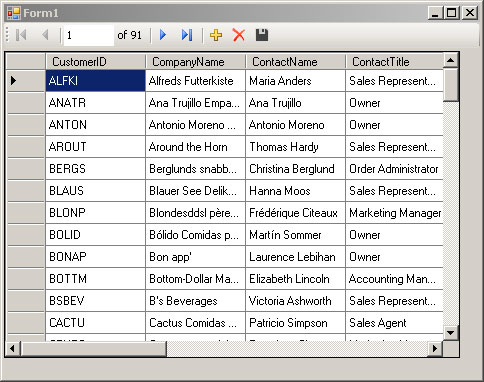

This walkthrough was easy. It involved connecting to the SampleDatabase.mdf database I just created via a Data Source Configuration Wizard. The wizard produced a SampleDatabaseDataSet that was added to my project, appearing in the Data Sources window. Then it was simply a matter of adding a control to the Form1 form by dragging a table from the dataset onto the form. That produced a DataGridView on the form that lets you see and edit table data upon running the application. Fig. 2 shows the app running.

Figure 2. The venerable Northwind database displayed in a Visual Studio Windows Forms app using LocalDB.

I didn't follow the Next Step of adding validation functionality. I did, however, investigate some more MSDN Library instructional material in pages linked to from the walkthroughs.

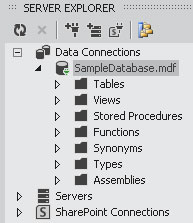

I found in the MSDN Library | Visual Studio 11 Beta | Visual Database Tools section a lot of pages about the Database Diagram Designer. It's supposedly opened up via a Database Diagrams node under the database connection in Server Explorer. I couldn't get this to work.

Figs. 3 through 5 illustrate my experience with Database Diagrams in a few different configurations of Visual Studio and SQL Server: Visual Studio 2010 (VS10) running with SQL Server 2008 on my regular work machine, where the Database Diagrams node shows up under a database in Server Explorer and the diagrams work (Fig. 4); Visual Studio 2010 running with SQL Server 2012 on my dev machine, where Database Diagrams show up but don't work, producing an error message (Fig. 5); and Visual Studio 11 Beta on my dev machine, running with SQL Server 2012, where the Database Diagrams node doesn't even show up, though every other node is the same (Fig. 3). The aforementioned error message in VS10 said: "This server version is not supported. Only servers up to Microsoft SQL Server 2008 are supported."

Figure 3. Contrary to some MSDN Library preview documentation, there's no "Database Diagrams" node under Data Connections in Visual Studio 11 Beta ...

Figure 4. ... while it shows up and works in VS10 with SQL Server 2008 ...

Figure 5. ... and shows up but doesn't work in VS10 with SQL Server 2012.

I looked around and found a "bug" titled "Missing Database Diagram Node in Server Explorer" filed by a user who encountered the same issue. Microsoft replied, "This is a by design change in the product. Diagrams are no longer supported in the new version of the SQL database, so the node is removed when you work with a new SQL server." Well, that settled that question. The user who filed the "bug" reported Microsoft's response in a forum and said, "It appears that they intend for us to use SSMS to design databases from now on." I'm kind of curious about that, considering that one of the stated goals to improve database development in Visual Studio was to incorporate more functionality in the IDE so users don't have to keep switching back and forth between it and SSMS.

Anyway, just beware of the "Database Diagrams" information. I'm not complaining, because as noted earlier, this is clearly marked as preview documentation, subject to change. I'm just hoping this might clear things up for someone who follows my same path and looks for help on the Web.

There's much more to explore, but I've spent enough time on LocalDB and need to move on to further explore Data Tools and other things that enhance database development in the latest Visual Studio. Stay tuned.

What are your database development experiences in Visual Studio 11 Beta? We'd love for you to share. Please comment here or drop me a line.

Posted by David Ramel on 05/01/2012 (1 comment)

A recent salary survey indicated that database-related programmers had excellent job security, and now there's more good news about job prospects in the exploding mobile arena and SQL Server usage in general.

First is a report that SQL Server developers are in high demand in the mobile marketplace. SQL Server skills are No. 4 on the list of most-coveted mobile expertise, according to WANTED Technologies Corp., which provides recruiting statistics based on help wanted ads and noted in the report that "Hiring demand for tech talent with mobile skill sets has grown drastically over the past years." This report was based on job ads placed in the month of February.

Java and Android come in first and second, of course, in this Google- and Apple-dominated mobile world, followed by JavaScript. No surprises there. But seeing SQL Server coming in at No. 4 was a surprise to me, beating technologies such as HTML, CSS, Linux and AJAX.

Next up is news that SQL, the language at the heart of SQL Server, is maintaining its popularity as a programming language. This comes from O'Reilly Radar, which has a new report on the popularity of various programming languages based on book sales. In an analysis that indicated a popularity fall-off for languages such as C# and ".net Languages" (that's funny, I thought C# was a .NET language, but what do I know?), "SQL" actually improved slightly. True, it was pretty far down on the list of "Large" programming languages in terms of total sales numbers, but a slight improvement from 2010 to 2011 speaks well for you data drivers. "The fact that SQL is holding steady indicates that, despite all the NoSQL talk, there's plenty of interest in traditional SQL skills," noted an article on ReadWriteWeb.com.

Of course, SQL Server developers should probably bone up on their security skills in the wake of yet another report listing SQL injection as the "#1 database security concern." This comes in a press release from GreenSQL, which surveyed some 6,000 GreenSQL SMB users.
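If you want a concrete reminder of what that concern looks like, here's a minimal sketch in Python. It uses the standard library's in-memory sqlite3 database purely as a stand-in for SQL Server so it runs anywhere, and the table, column and function names are all hypothetical. The point carries over to SQL Server clients: pass user input as parameters rather than concatenating it into the SQL text.

```python
import sqlite3

def make_db():
    """Create a throwaway in-memory database with a hypothetical users table."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (name TEXT, secret TEXT)")
    conn.executemany(
        "INSERT INTO users VALUES (?, ?)",
        [("alice", "a-secret"), ("bob", "b-secret")],
    )
    return conn

def find_unsafe(conn, name):
    # Vulnerable: user input is pasted straight into the SQL text.
    sql = "SELECT name FROM users WHERE name = '" + name + "'"
    return [row[0] for row in conn.execute(sql)]

def find_safe(conn, name):
    # Parameterized: the value travels separately from the SQL text.
    cursor = conn.execute("SELECT name FROM users WHERE name = ?", (name,))
    return [row[0] for row in cursor]

conn = make_db()
attack = "x' OR '1'='1"
unsafe_rows = find_unsafe(conn, attack)  # the injected OR clause matches every row
safe_rows = find_safe(conn, attack)      # the whole string is treated as a name
```

With the concatenated version, the attacker's quote characters rewrite the WHERE clause; with the parameterized version, the same input is just an odd-looking name that matches nothing.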

But take heart, you can improve those SQL Server security skills for free at the Microsoft Virtual Academy, which is offering "Mission Critical Confidence using Microsoft SQL Server 2012."

First up on the list of modules? "Microsoft SQL Server 2012 Security enhancements."

So there you go. Data Driver is a one-stop shop, informing you that SQL Server is a pretty good technology to know, and SQL injection is still a big problem, but the latest SQL Server edition addresses the problem and Microsoft will teach you how to do just that for free.

You're welcome.

How are you feeling job-wise if you're a database developer? Comment here or drop me a line.

Posted by David Ramel on 04/11/2012 (3 comments)

I've heard a lot about the new LightSwitch in Visual Studio 11 Beta and how it simplifies data-centric application development, so I thought I'd give it a try.

For those not familiar with LightSwitch, Eric Nelson wrote in an MSDN blog: "LightSwitch is targeted at business developers and power users creating custom LOB applications leveraging data from multiple sources that can be easily deployed to the desktop or cloud."

The new LightSwitch "has embraced OData," Microsoft said last month, so I thought I'd try out the new support for OData, which includes the ability to consume OData in your applications as well as produce and deploy your very own OData services for use by other applications.

Earlier this week, Beth Massi compiled some useful LightSwitch information (http://blogs.msdn.com/b/bethmassi/archive/2012/03/27/lightswitch-in-visual-studio-11-beta-resources.aspx), including some of her own articles about producing and consuming OData. Massi pointed out that the "goodies" in the new release include a new "cosmopolitan shell and theme," extensibility toolkit, documentation and a beta forum where users can ask questions.

A while back I wrote about how easy it was to cook up a LightSwitch application in less than an hour, from downloading the program to producing a query-based "screen" that let me add, edit and delete records from a SQL Azure database, so I thought I'd revisit that scenario, too.

I also wanted to hook up to the Windows Azure Marketplace DataMarket (one of my favorite Microsoft product names -- quick, say it three times fast without spitting all over yourself!), which I've also written about before in the context of an ASP.NET Web Forms application.

So I wanted to quickly develop a pseudo-app that hooked into three different kinds of external data sources: a straight, public OData feed; a Windows Azure DataMarket OData feed; and a cloud -- in this case SQL Azure-hosted -- database. (Yes, the archaic local database connection is dying fast.)

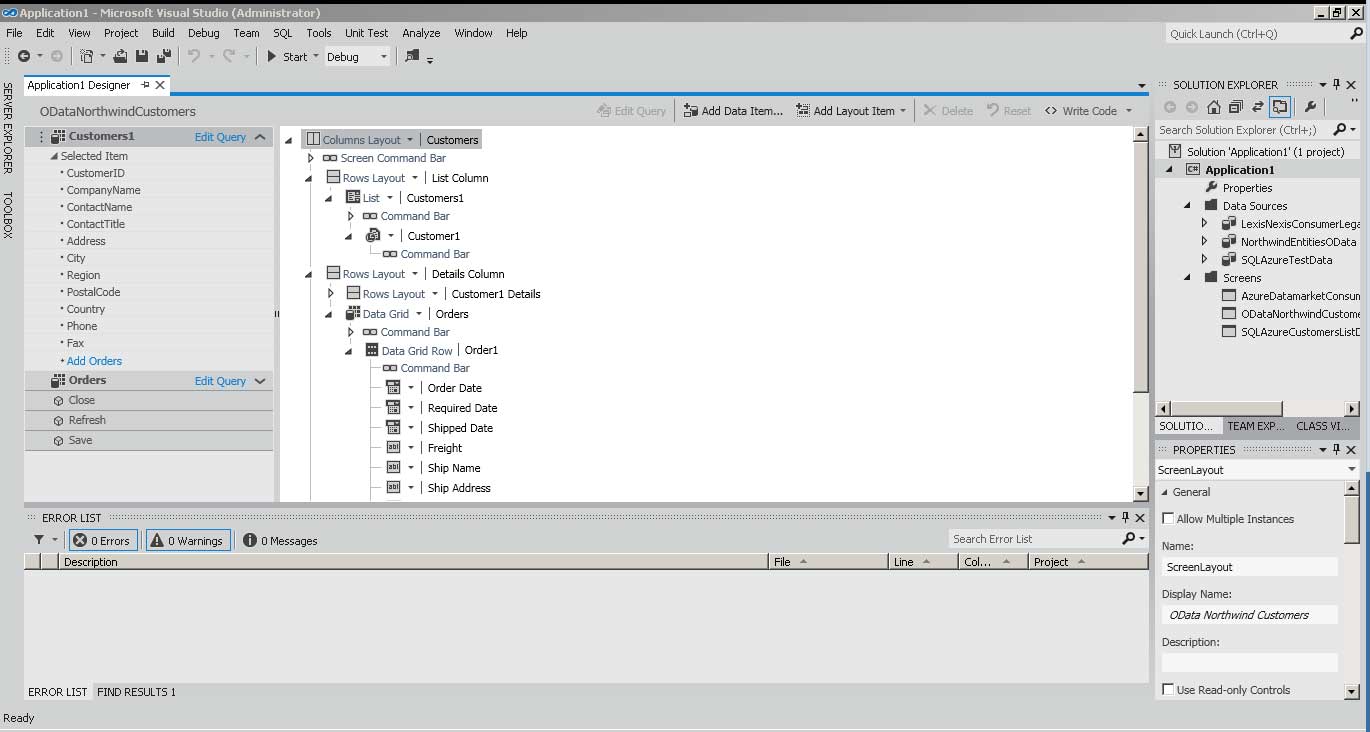

Downloading the Visual Studio 11 Beta was by far the most time-consuming part of my experiment, taking more than a couple of hours. There's been a lot of user discussion about the new look and feel of Visual Studio, with less chrome, less color, fewer doo-dads and so on. I can't say I like it that much, but I've always been a function-over-form guy. I've never really understood why people cared so much about the minor design aspects of an app. If it works and the UI doesn't get in the way, I'm fine with it. I couldn't care less how colorful it is. And as you can see in Fig. 1, there ain't much color here.

Figure 1. Plain-looking interface for Visual Studio 11, but it's also a beta.

Installation was uneventful and v11 installed side-by-side with v10, though some haven't been that lucky.

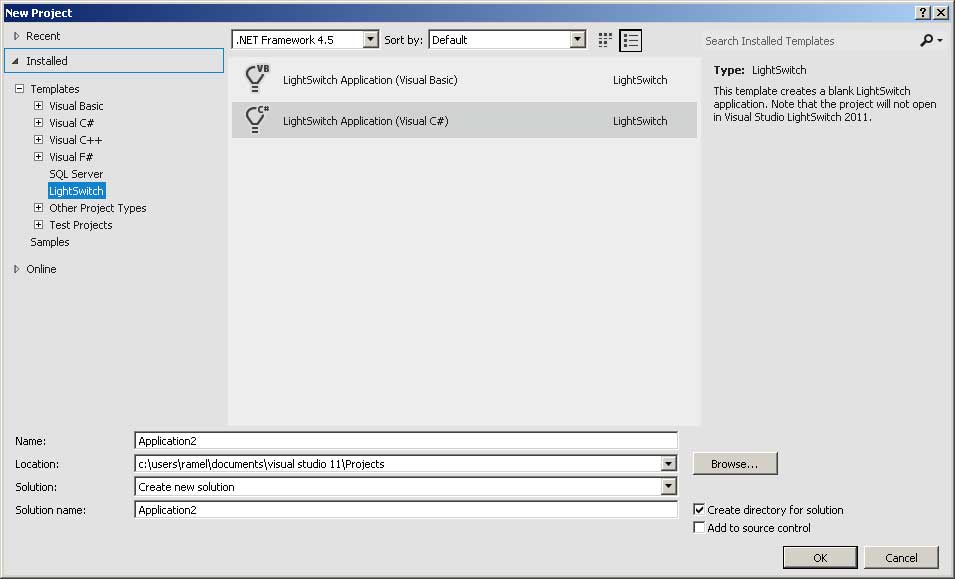

After the lengthy download and setup, cranking up Visual Studio 11 and starting a new project shows that LightSwitch is prominent in your project type choices (see Fig. 2).

Figure 2. LightSwitch is flipped on by default.

After your new project is set up, you're told to "Start with data," with the option of creating a new table locally or attaching to an external data source, which brings up a wizard that gives you choices of Database, SharePoint, OData Service or WCF RIA Service. I started with OData. I plugged in the source address of my OData feed (for example, the public Northwind test database feed address is: http://services.odata.org/Northwind/Northwind.svc/) and then chose which login information to use: None, Windows Credentials or Other Credentials, the latter taking a user name and password. This public Northwind feed doesn't require any login info. I then tested my connection and proceeded to choose which entities I wanted to import and named my data source. Upon clicking Finish I got several warnings (such as the one in Fig. 3) that certain features of the data source weren't available, specifically "many-to-many" relationships:

The relationship between CustomerDemographics and Customers will not be imported. Many-to-Many relationships are not supported.

I was then presented with an Application Designer you can use to add queries or "screens" that are the main UI features of LightSwitch applications. For example, you could add a query (with wizard options -- no coding required) to just find customers in certain geographical regions, and then add a screen to present that list. Or you could just quickly add a screen to present a full table. Many other options are available, of course, but I just wanted a quick-and-dirty screen to prove it works.
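For the curious, a designer query like "customers in a certain region" maps naturally onto OData's standard query options. Here's a rough sketch in Python that builds such a URL against the Northwind feed mentioned above. The `odata_url` helper is my own hypothetical function, and whether LightSwitch sends exactly this request under the covers is an assumption on my part; the `$filter` and `$top` options themselves are standard OData.

```python
from urllib.parse import quote

# Service root quoted earlier in this post.
SERVICE_ROOT = "http://services.odata.org/Northwind/Northwind.svc/"

def odata_url(entity_set, filter_expr=None, top=None):
    """Build an OData query URL from the standard $filter and $top options."""
    options = []
    if filter_expr is not None:
        # Percent-encode spaces and quotes inside the filter expression.
        options.append("$filter=" + quote(filter_expr))
    if top is not None:
        options.append("$top=" + str(int(top)))
    url = SERVICE_ROOT + entity_set
    if options:
        url += "?" + "&".join(options)
    return url

# Hypothetical query: the first five customers located in Germany.
url = odata_url("Customers", "Country eq 'Germany'", top=5)
```

Pasting a URL like that into a browser is a handy way to sanity-check a feed before pointing a wizard at it.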

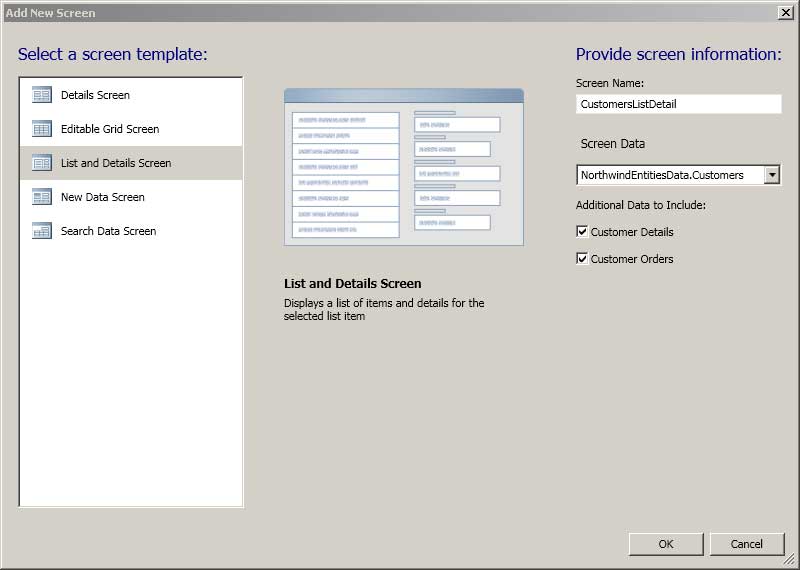

So bringing the Customer table to the designer let me choose the type of screen template and the data I wanted to include (see Fig. 3).

Figure 3. Getting down and dirty with the Application Designer.

I then clicked OK and I was ready to test the app. Clicking Start loaded a bunch of symbols and eventually started the app. And there was my screen, showing ALFKI and the rest of the familiar cast of Northwind customers. It was that easy. It took much less time to do it than to explain it.

For SQL Azure, of course, you have to first sign up (I used the three-month trial) for a Windows Azure account and set up a SQL Azure database. By the way, migrating a database to SQL Azure still seems kind of problematic, judging from my own experience and that of other bloggers on the Web. There are various ways to do that, but the migration wizard I used previously didn't work for me this time. After much trial and tribulation, I ended up connecting to a SQL Server 2008 R2 database on my machine via SQL Server Management Studio and using the scripting function to write a migration script that actually worked fine.

To connect to my Northwind (yes, them again) SQL Azure database, I right-clicked on the project in Solution Explorer and chose Add Data Source, which brought up the familiar list of data source types. For SQL Azure, I chose Database and plugged in the server name in the Connection Properties box that popped up. This server name looks like this: lfi6gi15xg.database.windows.net (I scrambled that mix of initial letters, so this isn't my real server name).

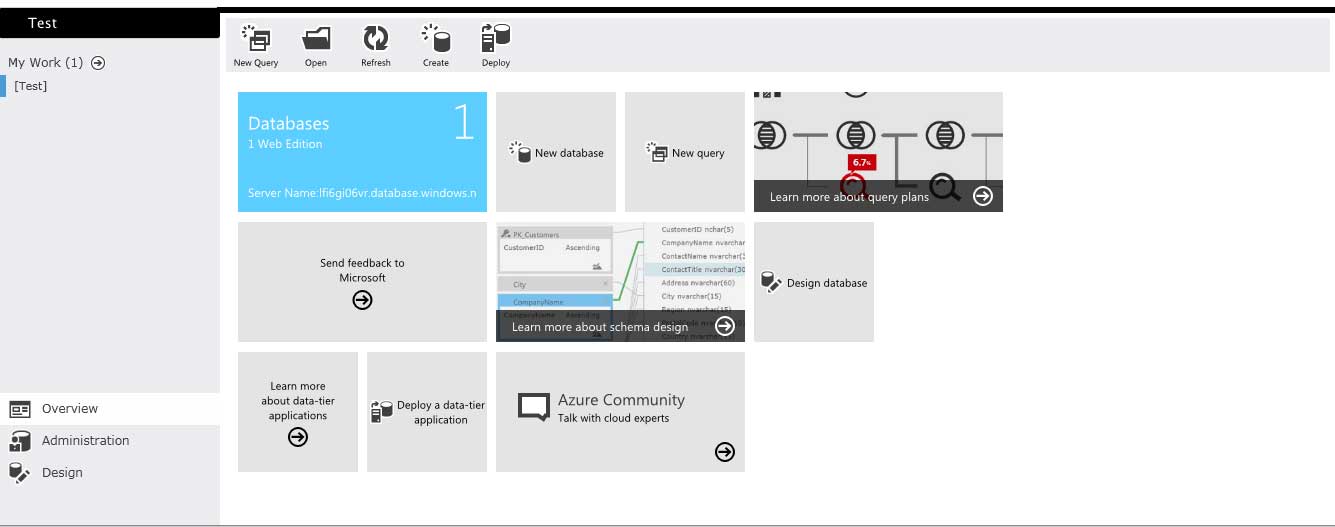

I then chose Use SQL Server Authentication and supplied the user name and password, which you can get from your SQL Server management portal. By the way, check out the new Metro styling of the portal in Fig. 4.

Figure 4. Check out the Metro interface.

I provided the name of the database (it looks up the choices for you on the server you specified), conducted a successful connection test and moved on to the Attach Data Source Wizard, where I chose which database objects to import (for the OData feed, remember, it was "entities" instead of "database objects") and named the data source, etc., as was done previously. From there, everything worked as before with the public OData feed.
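If you'd rather see those dialog-box values as a connection string, here's a minimal sketch in Python that assembles one in the ADO.NET keyword style. The helper function, user name and password are hypothetical placeholders, and the `user@server` login form and `Encrypt=True` setting are assumptions based on how SQL Azure connections were commonly written, not something the LightSwitch dialog showed me.

```python
def sql_azure_connection_string(server, database, user, password):
    """Assemble an ADO.NET-style connection string for a SQL Azure server."""
    # The short server name is the first label of the full host name.
    short_name = server.split(".")[0]
    parts = [
        ("Server", "tcp:" + server + ",1433"),
        ("Database", database),
        ("User ID", user + "@" + short_name),  # assumed user@server login form
        ("Password", password),
        ("Encrypt", "True"),
    ]
    return ";".join(key + "=" + value for key, value in parts) + ";"

# The scrambled server name from this post; credentials are placeholders.
conn_str = sql_azure_connection_string(
    "lfi6gi15xg.database.windows.net", "Northwind", "myuser", "placeholder")
```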

So far, connecting to and using a public OData feed and my own private SQL Azure database worked flawlessly.

My last test scenario was a DataMarket OData feed. You can sign up to subscribe to a DataMarket feed with a Windows Live ID. I chose one of dozens of free subscriptions available, but there are also dozens of paid and free-trial feeds. The DataMarket management portal provides you with account details such as a Primary Account Key and Customer ID. It also lets you "explore" datasets, so you can run sample queries, browse data, etc. This is where you get the Service root URL you need to connect with LightSwitch. For example, I subscribed to the free Consumer Legal Articles feed from Lawyers.com, which has a URL of: https://api.datamarket.azure.com/LexisNexis/ConsumerLegalArticles/.

Back in LightSwitch, I went to add another data source, chose OData Service as the type, plugged in the aforementioned Service root URL and chose Other Credentials, using my aforementioned Customer ID as the User name and Primary Account Key as the password. Upon a successful connection test, I proceeded as before.
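Outside of LightSwitch, a user name and password pair like that would typically travel as an HTTP Basic authentication header. Here's a small sketch in Python showing how that header is formed. I'm assuming Basic authentication is what happens under the covers (the wizard doesn't say), and the credential values below are obviously made-up placeholders.

```python
import base64

def basic_auth_header(user_name, password):
    """Return the HTTP Basic Authorization header value for the credentials."""
    raw = (user_name + ":" + password).encode("utf-8")
    return "Basic " + base64.b64encode(raw).decode("ascii")

# Service root URL quoted in this post.
SERVICE_ROOT = "https://api.datamarket.azure.com/LexisNexis/ConsumerLegalArticles/"

# Customer ID as the user name, Primary Account Key as the password.
headers = {"Authorization": basic_auth_header("my-customer-id", "my-account-key")}
```

Any HTTP client that lets you set request headers could then send `headers` along with a request to the service root.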

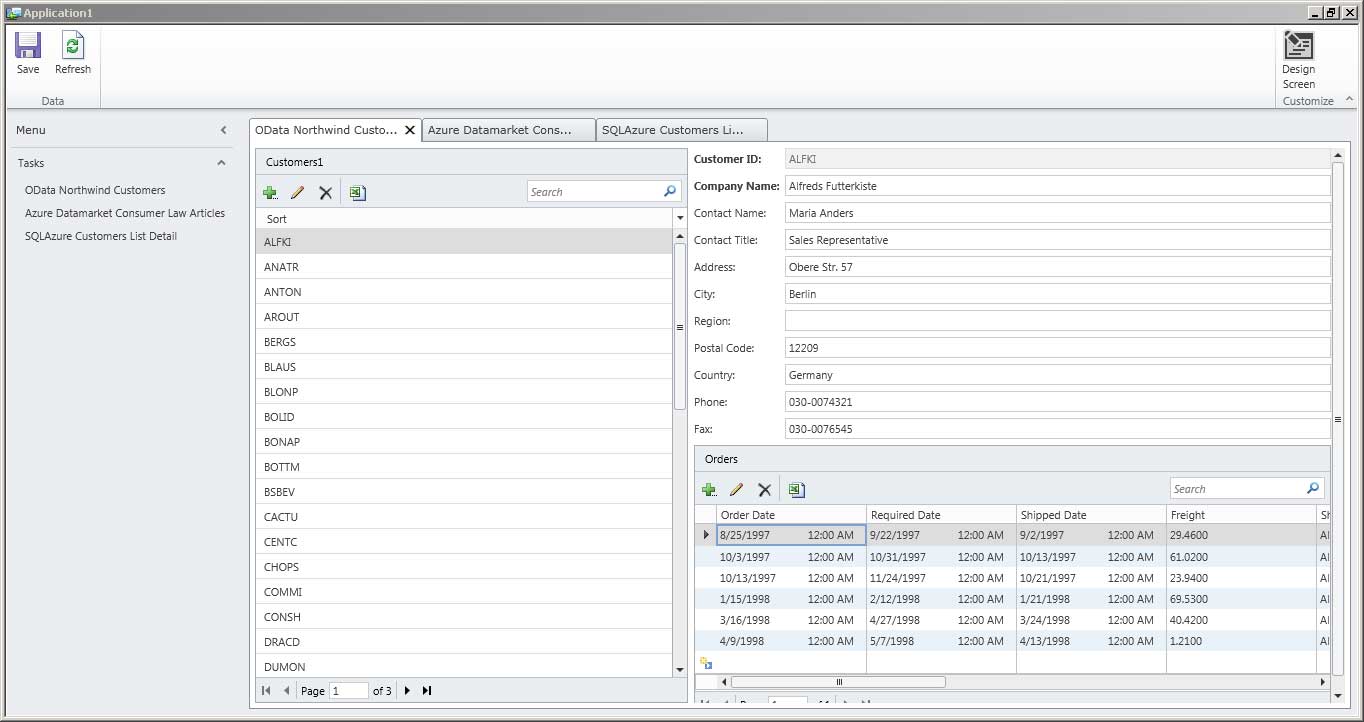

And it worked! My first LightSwitch in Visual Studio 11 Beta was up and running, connecting to three different external data sources and showing screens that displayed data from each (see Fig. 5).

Figure 5. Ta-da! LightSwitch works as advertised, showing how my application will look whether it's on the user's desktop, on a Web page, or somewhere up there in the cloud.

That's it. Thumbs up! The new LightSwitch in Visual Studio 11 Beta works as advertised, giving you "a simpler and faster way to create professional-quality business applications for the desktop, the Web and the cloud," as Microsoft said on its LightSwitch 2011 trial page, which you can download if you don't want to bother with downloading and installing one of the more full-featured Visual Studio 11 Beta editions.

What do you think of the new Visual Studio and LightSwitch? Did you manage to say Windows Azure Marketplace DataMarket three times fast without spitting all over yourself? Comment here or drop me a line.

Posted by David Ramel on 04/03/2012 (8 comments)

Microsoft yesterday released the final JDBC Driver 4.0 for SQL Server after three community technology previews, continuing the effort to open its technology to better accommodate programmers using PHP, ODBC and even Hadoop, among others.

The driver provides access to SQL Server editions going back to SQL Server 2005 -- and SQL Azure -- from any Java application or applet. The Type 4 JDBC driver provides connectivity via standard JDBC APIs in Java SE 5 and Java SE 6.

Program manager Shamitha Reddy announced the release in a blog post, highlighting four new features:

- Pure Java Kerberos -- Kerberos was added to the list of authentication options for non-Windows users.

- Always On -- Instead of database mirroring, users can choose to use Always On Availability Groups, explained here.

- Correlated Tracing with XEvents -- The SQL Server Extended Event general event-handling system that correlates data from different sources sports a new UI and lets users track driver-related actions.

- FormatID change in XA Transactions -- The new driver supports Java Platform, Enterprise Edition/JDBC 2.0 optional distributed transactions, sometimes called "eXtended Architecture" transactions. Responding to customer feedback, Microsoft changed the way the FormatID field is used so it can be used in all XA transactions, Reddy said.

Other enhancements listed by Reddy include better cloud (SQL Azure) support, support for UTF-16 Unicode character encoding and support for sparse columns ("ordinary columns that have an optimized storage for null values," according to MSDN), along with bug fixes. You can get it here.
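To make a couple of those features more concrete, here's a sketch of how they might surface in a JDBC connection URL. It's written in Python only to assemble the strings; the URL itself is what a Java application would hand to the driver. The helper function and host names are hypothetical, and the property names (`authenticationScheme=JavaKerberos`, `multiSubnetFailover`) are my understanding of the driver's connection properties, so check them against the documentation for your driver version.

```python
def jdbc_sqlserver_url(host, port=1433, **properties):
    """Build a jdbc:sqlserver:// URL with key=value connection properties."""
    url = "jdbc:sqlserver://" + host + ":" + str(port)
    for key, value in properties.items():
        url += ";" + key + "=" + str(value)
    return url + ";"

# Pure Java Kerberos authentication (assumed property names).
kerberos_url = jdbc_sqlserver_url(
    "dbhost.example.com",
    databaseName="Northwind",
    integratedSecurity="true",
    authenticationScheme="JavaKerberos",
)

# Connecting through an availability group listener (assumed property name).
always_on_url = jdbc_sqlserver_url(
    "aglistener.example.com",
    databaseName="Northwind",
    multiSubnetFailover="true",
)
```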

What do you think about Microsoft's commitment to interoperability? Comment here or drop me a line.

Posted by David Ramel on 03/07/2012 (0 comments)

"I have seen simple select statements [in Entity Framework] with 4 or 5 includes result in nearly 5,000 line SQL statements when an equivalent hand-written SQL statement is [about] 15 lines."

So reads the first of 21 comments on Microsoft's "ADO.NET Entity Framework (EF) Feature Suggestions" site, where developers can post, vote and comment on proposed EF enhancements. "Improved SQL Generation" is by far the No. 1 feature suggestion, with some 1,400 votes.

Several developer complaints about SQL generation concerned bloated code or slow performance. You saw an example of the former; the latter is exemplified by this comment:

"I just documented a case where the Contains() operator reduces EF query performance by a factor of 300. Further diagnosis revealed that the slowdown occurs in the query-generation portion of the request, so I agree that this needs some serious attention."

Microsoft's Diego Vega, program manager for DataFx, responded that the core EF library update coming in .NET 4.5 will provide better SQL generation in some cases, but not for all of the listed scenarios. "I am going to leave this idea as open for now, until we figure out a better way to track individual scenarios, which we need to do to get more actionable data," Vega said.
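For context on the Contains() complaint quoted above: a Contains() check against an in-memory list generally ends up as a SQL IN clause. Here's a minimal hand-written equivalent in Python, using the standard library's sqlite3 as a stand-in for SQL Server. This is not what EF generates, just a sketch of the kind of short statement developers compare it against; the table and function names are hypothetical.

```python
import sqlite3

def customers_in(conn, ids):
    """Fetch rows whose id is in ids, using one placeholder per value."""
    if not ids:
        return []
    placeholders = ", ".join("?" for _ in ids)
    sql = ("SELECT id, name FROM customers "
           "WHERE id IN (" + placeholders + ") ORDER BY id")
    return conn.execute(sql, list(ids)).fetchall()

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customers (id TEXT PRIMARY KEY, name TEXT)")
conn.executemany(
    "INSERT INTO customers VALUES (?, ?)",
    [("ALFKI", "Customer A"), ("ANATR", "Customer B"), ("BONAP", "Customer C")],
)
rows = customers_in(conn, ["ALFKI", "BONAP"])
```

Building the statement text is trivial here, which is presumably why a big slowdown in the query-generation step stings so much.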

Speaking of .NET 4.5 and EF core libraries, some comments concerned the attempted separation of EF releases from .NET. The EF team shipped the first two releases as part of the .NET Framework, but then released separate updates to get them out to developers faster, as mentioned in this October blog post. That post said the June 2011 CTP was the first attempted full EF shipment separate from the .NET Framework. But technical problems resulted, so several feature improvements -- such as support for enums, spatial types and others -- that rely on .NET core library updates will have to wait for the next EF preview that will ship along with the upcoming public preview of .NET 4.5.

When Vega reiterated this on the feature request page, a couple of readers found fault with that, as evidenced by this comment:

"Diego, No offense but moving to v4.5 of the framework is really not an option for ... most corporate software."

And this:

"I guess because EF core library is included in .NET framework we should wait until then! That is awful, please consider put EF libraries out of .NET framework. Good ORM needs frequent release cycle."

Umm ... isn't that what the team said they were trying to do back in October?

Why, yes, replied Vega in a response to the two reader comments that were posted earlier this month:

"We have indeed looked at taking the whole of EF out of .NET Framework for this same reason. The June 2011 CTP of EF was a first attempt that showed us it is going to be harder than we thought because of the impact on existing applications, partner teams and ADO.NET provider writers."

You can read more about the long, strange trip to EF 5.0 here and bone up on coming EF 5.0 improvements here.

Besides improved SQL generation, the top five feature requests include "Batch CUD Support" (1,179 votes); "EF Support for Second Level Cache" (655 votes); "Support for multiple databases" (595 votes); and "Entity Designer: Speed up & Optimize for using with 200+ entities" (524 votes).

What feature requests would you like to see in EF? Comment here or drop me a line.

Posted by David Ramel on 02/27/2012 (6 comments)

The Microsoft ADO.NET team today announced that the upcoming Entity Framework 5.0 could boost application performance by some 67 percent over EF 4.0.

Reducing data access overhead in the O/RM and other performance tweaks resulted in one internal test that showed "repeat execution time of the same LINQ query has been reduced by around 6x," the team said in a blog post.

One improvement involves automatically compiling LINQ to Entities queries. Developers have long been able to compile such queries explicitly via a CompiledQuery.Compile method call, but apparently they weren't aware of the feature or found the API more difficult to work with than regular LINQ, the team said. (You can read about the nuts and bolts of automatic compilation here.) Now the translation of inline LINQ queries is automatically cached and CompiledQuery is no longer necessary.

In comparison tests of EF 4.0 and EF 5.0 involving a repeated query executed with ADO.NET, LINQ to SQL and various EF methodologies, "we've improved the performance of LINQ to Entities queries nearly 600%," the team said.

Of course, you shouldn't expect that kind of improvement in your real-world applications. "It would be amazing if we could give you 600% improved performance across the board," the team said. "Unfortunately, most applications do more interesting things than run the same query repeatedly."

However, a suite of tests simulating typical usage in real-world apps showed a 67 percent performance increase after the server was upgraded from EF 4.0 to EF 5.0. "These numbers are based on an internal build, but we're working hard to get a build available for public verification of these improvements," the team said.

Just upgrading to .NET 4.5 will provide these performance improvements to EF 4.0 apps. The ADO.NET team has stated it wants to start shipping EF versions separately from the .NET Framework, but there were some upgrade issues that required core library updates, so some EF improvements have to wait for .NET 4.5. "This includes support for Enum Types, Spatial Types, Table-Valued Functions, Stored Procedures with Multiple Results and Auto-Compiled LINQ Queries," an earlier blog post said.

Also, last week the final go-live release of EF 4.3 was announced, including improvements to Code First migrations, some bug fixes and several other tweaks.

The ADO.NET team blog post about EF 5.0 improvements is brand-new at the time I write this, so no readers have weighed in with comments yet, but I'll keep an eye out for any developer concerns or interesting feedback. In the meantime, you can comment here or drop me an e-mail.

Posted by David Ramel on 02/14/2012 (1 comment)