Microsoft Open Sources 'ONNX Script' for Python Machine Learning Models

Microsoft has open sourced "ONNX Script," a library for authoring machine learning models in Python.

Python has long been recognized as a go-to programming language for data science and is often used to author machine learning models. The new project builds on that by focusing on clean, idiomatic Python syntax and composability through ONNX-native functions.

ONNX (Open Neural Network Exchange) is an open standard for machine learning interoperability, defining a common set of the building blocks of machine learning and deep learning models, called operators, along with a common file format to enable AI developers to use models with a variety of frameworks, tools, runtimes and compilers.

The ONNX Script project (housed on GitHub) aims to let developers write ONNX machine learning models in a subset of Python, regardless of their ONNX expertise.

"Prior to ONNX Script, authoring ONNX models required deep knowledge of the specification and serialization format itself," Microsoft said in an Aug. 1 announcement. "While eventually a more convenient helper API was introduced that largely abstracted the serialization format, it still required deep familiarity with ONNX constructs."

The ONNX Script site says it's intended to be:

- Expressive: enables the authoring of all ONNX functions.

- Simple and concise: function code is natural and simple.

- Debuggable: allows for eager-mode evaluation that enables debugging the code using standard Python debuggers.

Specifically, the new ONNX Script approach involves two deep Python integrations:

- It provides a strongly typed API for all operators in ONNX (all 186 as of opset 19). This allows existing Python tooling, linters and integrated development environments (IDEs) to provide valuable feedback and enforce correctness.

- ONNX Script supports idiomatic Python language constructs to make authoring ONNX more natural, including support for conditionals and loops, binary and unary operators, subscripting, slicing and more. For example, the expression `a + b` in Python would translate to the ONNX operator as `Add(a, b)`.
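The operator mapping described above can be illustrated with a small sketch built on Python's standard `ast` module. This is a toy, not ONNX Script's actual code: the `BINARY_OPS` and `UNARY_OPS` tables and the `to_onnx_call` and `translate` helpers are hypothetical names introduced here, although `Add`, `Sub`, `Mul`, `Div` and `Neg` are real ONNX operator names.

```python
import ast

# Illustrative mapping from Python AST operator types to ONNX operator names.
# The tables and helpers are a toy, not ONNX Script's implementation.
BINARY_OPS = {ast.Add: "Add", ast.Sub: "Sub", ast.Mult: "Mul", ast.Div: "Div"}
UNARY_OPS = {ast.USub: "Neg"}


def to_onnx_call(node):
    """Render a Python expression node as nested ONNX-style operator calls."""
    if isinstance(node, ast.Name):
        return node.id
    if isinstance(node, ast.BinOp) and type(node.op) in BINARY_OPS:
        left = to_onnx_call(node.left)
        right = to_onnx_call(node.right)
        return f"{BINARY_OPS[type(node.op)]}({left}, {right})"
    if isinstance(node, ast.UnaryOp) and type(node.op) in UNARY_OPS:
        return f"{UNARY_OPS[type(node.op)]}({to_onnx_call(node.operand)})"
    raise NotImplementedError(f"unsupported syntax: {ast.dump(node)}")


def translate(expression):
    """Parse a Python expression and translate it to ONNX-style calls."""
    return to_onnx_call(ast.parse(expression, mode="eval").body)


print(translate("a + b"))         # Add(a, b)
print(translate("-(a * b) / c"))  # Div(Neg(Mul(a, b)), c)
```

Anything outside the small tables raises `NotImplementedError`, which loosely mirrors the idea that only a subset of Python is supported.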

Primary components used to do that include:

- A converter which translates a Python ONNX Script function into an ONNX graph, accomplished by traversing the Python Abstract Syntax Tree to build an ONNX graph equivalent of the function.

- A runtime shim that allows such functions to be evaluated (in an "eager mode"). This functionality currently relies on ONNX Runtime for executing ONNX ops; a Python-only reference runtime for ONNX is underway and will also be supported.

- A converter that translates ONNX models and functions into ONNX Script. This capability can be used to fully round-trip ONNX Script ↔ ONNX graph.
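To make the first two components more concrete, here is a minimal sketch using only the Python standard library. It is an assumption-laden toy rather than ONNX Script's implementation: the `convert` and `run_graph` helpers, the dictionary-based graph layout and the `scaled_sum` function are all hypothetical, and plain Python arithmetic stands in for ONNX Runtime. The sketch walks a function's AST to build a list of graph nodes, then checks that running the graph matches calling the function directly, which is the spirit of eager-mode evaluation.

```python
import ast
import operator

# Python AST operator -> (ONNX operator name, plain-Python implementation).
OPS = {
    ast.Add: ("Add", operator.add),
    ast.Sub: ("Sub", operator.sub),
    ast.Mult: ("Mul", operator.mul),
}

# Hypothetical function to convert; kept as source text so it can be parsed.
SOURCE = """
def scaled_sum(a, b, scale):
    total = a + b
    return total * scale
"""


def convert(source):
    """Walk the function's AST and emit a flat list of graph nodes."""
    func = ast.parse(source).body[0]
    graph = {"inputs": [arg.arg for arg in func.args.args], "nodes": [], "outputs": []}

    def emit(expr, name=""):
        """Emit nodes for expr; return the name of the value that holds it."""
        if isinstance(expr, ast.Name):
            return expr.id
        if isinstance(expr, ast.BinOp) and type(expr.op) in OPS:
            inputs = [emit(expr.left), emit(expr.right)]
            output = name or f"tmp_{len(graph['nodes'])}"
            graph["nodes"].append(
                {"op_type": OPS[type(expr.op)][0], "inputs": inputs, "output": output}
            )
            return output
        raise NotImplementedError(ast.dump(expr))

    for stmt in func.body:
        if isinstance(stmt, ast.Assign):
            target = stmt.targets[0].id
            value = emit(stmt.value, target)
            if value != target:  # plain alias such as `y = x`
                graph["nodes"].append(
                    {"op_type": "Identity", "inputs": [value], "output": target}
                )
        elif isinstance(stmt, ast.Return):
            graph["outputs"].append(emit(stmt.value))
        else:
            raise NotImplementedError(ast.dump(stmt))
    return graph


def run_graph(graph, *args):
    """Execute the node list in order, using Python arithmetic for each op."""
    impl = {name: fn for name, fn in OPS.values()}
    impl["Identity"] = lambda x: x
    values = dict(zip(graph["inputs"], args))
    for node in graph["nodes"]:
        node_inputs = [values[name] for name in node["inputs"]]
        values[node["output"]] = impl[node["op_type"]](*node_inputs)
    return values[graph["outputs"][0]]


graph = convert(SOURCE)
graph_result = run_graph(graph, 2.0, 3.0, 4.0)

# "Eager" evaluation in this toy: run the same source as ordinary Python.
namespace = {}
exec(SOURCE, namespace)
eager_result = namespace["scaled_sum"](2.0, 3.0, 4.0)

for node in graph["nodes"]:
    print(node)
print(graph_result, eager_result)  # 20.0 20.0
```

Because the eager path is ordinary Python, a standard debugger can step through `scaled_sum` line by line, while the graph path produces a serializable description of the same computation.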

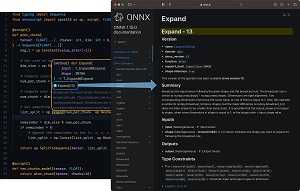

ONNX Script was reportedly designed with debuggability in mind and also features front-and-center IntelliSense support. The accompanying graphic shows a screenshot of Visual Studio Code displaying a hover tooltip for the ONNX Expand operator, with inline documentation that links to the full online documentation.

ONNX Script IntelliSense in Action (source: Microsoft).

Along with helping developers write ONNX machine learning models without being ONNX experts, Microsoft said the project also serves as the foundation for its new PyTorch ONNX exporter to support TorchDynamo, which it dubbed "the future of PyTorch." TorchDynamo is described as a Python-level JIT compiler designed to make unmodified PyTorch programs faster.

PyTorch, meanwhile, is an open source machine learning/deep learning framework based on the Torch library, used for applications such as computer vision and natural language processing, originally developed by Meta AI (formerly Facebook's AI Research lab), according to Wikipedia.

Microsoft said it will publish more information about that PyTorch ONNX exporter as PyTorch 2.1 nears general availability, for now only noting that ONNX Script is pervasive throughout the company's renewed approach.

"Going forward, we envision ONNX Script as a means for defining and extending ONNX itself," Microsoft said. "New core operators and higher-order functions that are intended to become part of the ONNX standard could be authored in ONNX Script as well, reducing the time and effort it takes for the standard to evolve. We have proven this is a viable strategy by developing Torchlib, upon which the upcoming PyTorch Dynamo-based ONNX exporter is built.

"Over the coming months, we will also support converting ONNX into ONNX Script to enable seamless editing of existing models, which can play a key role in optimization passes, but also allow for maintaining and evolving ONNX models more naturally. We also intend to propose ONNX Script for inclusion directly within the ONNX GitHub organization soon, under the Linux Foundation umbrella."

About the Author

David Ramel is an editor and writer at Converge 360.