In-Depth

The Cloud's The Limit with Serverless Architecture and Azure Functions

The cloud has enabled some incredible innovation, like serverless compute, which is transforming the way we build applications for the cloud. We dive into serverless concepts and explore how they are supported by Azure Functions.

- By Joseph Fultz, Darren Brust

- 07/17/2017

From tools to machines to computers, we look for ways to automate repetitive work and standardize the context in which we work so that we can focus on high-value specialized contributions to complete tasks and solve problems. In parallel, it's clear that as the IT industry has evolved, we've strived to achieve higher density at every level of a system, from the CPU to the server farm, in hopes of getting the most efficient output from our systems. A serverless architecture is the point at which those two streams converge. It's the point at which an individual's effort is most granularly focused on the specific task and the waste in the system is at a minimum.

In a serverless world, developers create solutions rather than infrastructure, and they monitor execution rather than environment health. Finance pays for time slices, not a mostly idle virtual machine (VM) farm. In Microsoft Azure, there are many consumable services that can be chained together to form an entire solution. A key new component of this is Azure Functions, which provides the serverless compute capability in a complete solution ecosystem. In this two-part article, we'll explore what it means to have a serverless architecture and take a look at Azure Functions tooling.

Serverless Architecture with Azure Functions

There are many definitions for serverless architecture. Despite the nomenclature, serverless architecture isn't code that runs without servers. In fact, servers are still very much required; you just don't have to think about them. You might think it's the next iteration of Platform as a Service (PaaS), and while that's close, it's not quite that, either. So what, exactly, is it? Fundamentally, serverless architecture is the next evolution of cloud services. It's built on top of PaaS and abstracts away VMs, application frameworks and external dependencies (via bindings) so that developers can focus simply on the code that implements business logic.
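To make the idea of bindings more concrete, here's a minimal, hypothetical sketch in Python. It doesn't use the Azure Functions SDK. The `run_with_bindings` host, the in-memory `input_store` and `output_store`, and the `price_order` function are all illustrative assumptions. The point is that the function holds only business logic, while a host resolves its input from a source and writes its return value to a sink.

```python
from typing import Any, Callable, Dict, List

# Hypothetical in-memory stand-ins for external services; a real platform
# would connect bindings like these to storage, queues or databases for you.
input_store: Dict[str, Dict[str, Any]] = {
    "order-1": {"id": "order-1", "quantity": 3, "unit_price": 2.5},
}
output_store: List[Dict[str, Any]] = []


def price_order(order: Dict[str, Any]) -> Dict[str, Any]:
    """Business logic only: no connection strings, clients or I/O."""
    return {"id": order["id"], "total": order["quantity"] * order["unit_price"]}


def run_with_bindings(
    func: Callable[[Dict[str, Any]], Dict[str, Any]],
    key: str,
) -> Dict[str, Any]:
    """Hypothetical host: resolve the input binding, invoke, apply the output binding."""
    payload = input_store[key]   # input binding: fetch the data the function needs
    result = func(payload)       # the function itself never touches the stores
    output_store.append(result)  # output binding: persist the return value
    return result


print(run_with_bindings(price_order, "order-1"))
# prints {'id': 'order-1', 'total': 7.5}
```

Because `price_order` has no I/O, it can be tested by calling it directly with a dictionary, and the storage behind the bindings can change without touching it.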

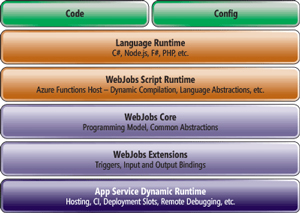

It's worth discussing the typical properties of Azure Functions and of serverless architectures. Azure Functions provides the serverless compute component of a serverless architecture. As shown in Figure 1, Azure Functions builds on top of Azure App Service and the WebJobs SDK. It adds a layer that hosts and runs your function code and provides niceties such as runtime binding.

Figure 1: Azure Functions Architecture

Thus, all the benefits you get from Azure App Service are available, and the App Service settings can be found just below the Functions services in the settings. It also means that the WebJobs SDK can be used to create a local runtime environment. While not an architectural benefit, Azure Functions is a polyglot platform that supports a variety of languages, including JavaScript (Node.js), C#, PHP, Python and even Windows PowerShell. The platform handles runtimes and dependencies. This is an advantage for those working in mixed environments, as it lets teams with differing preferences and skills use the same platform for building and delivering functionality. Using Azure Functions as the serverless compute component of a serverless architecture provides several key benefits:

- Reduced time to market: Because the platform manages the underlying infrastructure, developers are free to focus on application code that implements business logic. Azure Functions might be considered a further decomposition of microservices into nanoservices. Both paradigms benefit the development process because development, testing and deployment activities are narrowly focused on a small bit of functionality that's managed separately from other related but discrete services.

- Lower total cost of ownership: Because there's no infrastructure or OS to maintain, developers can focus on providing value to the business. Additionally, the investment needed for DevOps and maintenance is significantly simplified and reduced.

- Pay per execution: A key cost saving typically comes from paying only for the cycles your code actually consumes, combined with increasing the functional density of the compute resources you use.
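The pay-per-execution benefit is easiest to see with a little arithmetic. The sketch below assumes a billing model with two components, a charge per execution and a charge per GB-second of memory-time, and compares it with a fixed hourly VM. Every rate and workload figure is a made-up placeholder, not an Azure price, so substitute current published pricing for a real estimate.

```python
# Every rate below is a hypothetical placeholder, not a published Azure price.
PRICE_PER_MILLION_EXECUTIONS = 0.20  # per 1,000,000 executions
PRICE_PER_GB_SECOND = 0.000016       # per GB-second of memory-time
VM_PRICE_PER_HOUR = 0.10             # per hour for an always-on VM


def serverless_cost(executions: int, avg_seconds: float, memory_gb: float) -> float:
    """Bill only for executions and the memory-time they actually consume."""
    execution_charge = executions / 1_000_000 * PRICE_PER_MILLION_EXECUTIONS
    gb_seconds = executions * avg_seconds * memory_gb
    return execution_charge + gb_seconds * PRICE_PER_GB_SECOND


def vm_cost(hours: float) -> float:
    """Bill for every provisioned hour, whether the VM is busy or idle."""
    return hours * VM_PRICE_PER_HOUR


# Hypothetical workload: 2 million half-second runs a month at 0.25 GB,
# compared with one VM left running for a 730-hour month.
monthly_serverless = serverless_cost(2_000_000, 0.5, 0.25)
monthly_vm = vm_cost(730)
print(f"serverless: {monthly_serverless:.2f}, vm: {monthly_vm:.2f}")
# prints serverless: 4.40, vm: 73.00
```

With these placeholder numbers, the per-execution bill is far lower because the VM sits idle for most of the month. Under sustained heavy load, the comparison can flip, so it's worth running the numbers for your own workload.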

The Shift to Serverless Compute

By Yochay Kiriaty

Serverless compute represents a fundamental paradigm shift in how we think about cloud computing and building applications for the cloud. Serverless computing provides developers with a completely abstracted and infinitely scalable environment to execute their code and provides a payment model that is purely focused on code execution.

Serverless compute can be looked at as the next step in the evolution of Platform as a Service (PaaS). It fulfils the PaaS promise of abstracting the application infrastructure from the code and providing auto-scale capabilities. The major differentiator for serverless is the per-execution pricing model (rather than paying for the time the code is hosted) and instant, unlimited scale. Serverless compute provides a fully managed compute experience (zero administrative tasks), instant unlimited scale (zero scale configurations) and event-driven execution (real-time processing). This enables developers to build a new class of applications that scale by design and, ultimately, are more resilient.

Developers building serverless applications use serverless compute like Azure Functions, but they also use an ever-growing number of fully managed Azure or third-party services to compose working end-to-end serverless solutions. Developers today can easily set up and run complete serverless architectures that scale with ease, by design and at little cost. Developers also are free from the burden of managing and monitoring infrastructure; they can focus on their business logic and solve problems related to the business and not to the maintenance of the infrastructure running their code.

Serverless is here to change the way we think about building applications and is here to stay for a long time. Join the conversation at bit.ly/2hhP41O and at bit.ly/2gcbPzr. Submit feature requests to bit.ly/2hhY3jq and reach out to us on Twitter: @Azure with the hashtag #AzureFunctions.

Yochay Kiriaty is a principal program manager on the Microsoft Azure team, specifically driving Web, mobile, API and functions experiences as part of the Azure App Service Platform. Kiriaty has been working with Web technologies since the late 90s and has a passion for scale and performance. You can contact him at [email protected] and follow him on Twitter: @yochayk.

As for your approach when building with Azure Functions, the key to realizing their full value is to write only the smallest unit of logic needed for a single scope of work and to keep dependencies to a minimum. When working with Azure Functions, it's best to follow these practices:

- Each Azure Function should be single-purpose. Think of functions as short, concise statements rather than compound sentences.

- Operations should be idempotent. That means calling an API endpoint again with the same parameters leaves the system in the same state as the first call did.

- Keep execution times brief. The goal should be to receive input, perform the desired operations and get the results to the downstream consumers. For long-running processes, you might consider Azure WebJobs or even hosting the service in Azure Service Fabric.

- To keep overall complexity low and execution quick, minimize internal dependencies. Adding too much runtime weight slows initial load times and adds complexity to the system.

- Handle external integration through input and output bindings, and keep functions stateless. Common guidance for high-performance sites is to write stateless services. Statelessness avoids complicating or slowing a service with keeping, serializing and deserializing runtime state. It also simplifies debugging, because you don't have to discover and attempt to reproduce state to figure out what happened; with a stateless service, it's just a matter of passing the same parameter values back through.
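The idempotency and statelessness guidelines can be illustrated with a small, hypothetical sketch in Python. The `apply_payment` handler keeps nothing between calls; everything it needs arrives as parameters. It records each payment under a caller-supplied `payment_id`, so a retried call with the same parameters leaves the ledger unchanged. The dictionary standing in for durable storage is an assumption; in practice, that state would live in an external service reached through a binding.

```python
from typing import Dict


def apply_payment(ledger: Dict[str, float], payment_id: str, amount: float) -> Dict[str, float]:
    """Stateless, idempotent handler.

    All inputs are passed in, and nothing is cached between invocations.
    Recording the payment under its id (an upsert) instead of adding to a
    running balance means a retry with the same parameters yields the same state.
    """
    updated = dict(ledger)        # work on a copy; don't mutate the caller's ledger
    updated[payment_id] = amount  # upsert keyed by payment_id
    return updated


def balance(ledger: Dict[str, float]) -> float:
    """Derive the balance from the recorded payments."""
    return sum(ledger.values())


ledger: Dict[str, float] = {}
once = apply_payment(ledger, "pay-42", 25.0)
twice = apply_payment(once, "pay-42", 25.0)  # simulated retry of the same call
print(balance(once), balance(twice))
# prints 25.0 25.0
```

A non-idempotent version that did `balance += amount` on every call would charge the customer twice when the platform retries a failed invocation. Keying the write by an identifier supplied in the request is one common way to avoid that.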

Properties of serverless architecture include the following:

- The unit of work in a serverless architecture takes the form of a stateless function invoked by events.

- Scaling, capacity and infrastructure management are provided as a service.

- Billing is execution-based: you pay only for the time your code is running.
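The first property, stateless functions invoked by events, can be sketched as a tiny in-process event loop. This is a hypothetical illustration, not how the Azure Functions runtime is implemented. `TRIGGERS` maps an event type to a function, a `deque` plays the role of a message queue, and each function returns zero or more follow-on events rather than calling the next function directly. The event names and handlers are invented for the example.

```python
from collections import deque
from typing import Callable, Deque, Dict, List, Tuple

# An event is a (type, payload) pair; the event names here are hypothetical.
Event = Tuple[str, dict]


def on_upload(payload: dict) -> List[Event]:
    """Single purpose: reject empty uploads, otherwise emit a follow-on event."""
    if payload.get("size", 0) <= 0:
        return []
    return [("file.validated", {"name": payload["name"]})]


def on_validated(payload: dict) -> List[Event]:
    """Single purpose: derive a thumbnail name and announce it."""
    return [("thumbnail.created", {"name": "thumb-" + payload["name"]})]


# Which function each event type triggers.
TRIGGERS: Dict[str, Callable[[dict], List[Event]]] = {
    "file.uploaded": on_upload,
    "file.validated": on_validated,
}


def dispatch(initial: List[Event]) -> List[Event]:
    """Invoke handlers until the queue drains; events with no trigger are returned."""
    queue: Deque[Event] = deque(initial)
    outputs: List[Event] = []
    while queue:
        event_type, payload = queue.popleft()
        handler = TRIGGERS.get(event_type)
        if handler is None:
            outputs.append((event_type, payload))  # nothing subscribed: final output
        else:
            queue.extend(handler(payload))  # handlers emit events, never call each other
    return outputs


print(dispatch([("file.uploaded", {"name": "cat.png", "size": 1024})]))
# prints [('thumbnail.created', {'name': 'thumb-cat.png'})]
```

Because each handler only returns events, adding a new step means registering another entry in `TRIGGERS` rather than editing the existing functions.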

Some challenges of serverless architecture include the following:

- Complexity: While serverless architecture simplifies many things for the developer, the platform abstractions require you to rethink the way you build applications. Managing a monolithic application as a single unit is more straightforward than managing a fleet of purpose-built functions and the dependencies between them. This is where capabilities such as those provided by Azure API Management come into play. They give you a way to create a consistent outward-facing namespace that ties together all of the discretely defined and managed functions.

- Tooling: Because the platform is relatively new, the tools to write, debug and test functions are still under development. As of this writing, they're in preview, but Visual Studio 2017 will have the tooling built in. Management and monitoring tools are also still evolving, but there's a basic interface for Azure Functions where you can see requests, successes, errors and request-response details. There's plenty of work to do when it comes to tying the platform in with application monitoring tools, and the Azure Functions team is working to grow and evolve that support.

- Organizational Support: For some organizations, moving to a serverless paradigm is a nontrivial undertaking. Many organizations already struggle to move to a fully automated continuous integration (CI)/continuous delivery (CD) pipeline and a microservices architecture. The move to a serverless design can add to those difficulties, as it often challenges current standards and requires educating staff on what's available, how to tie it together and how to manage it.

- No Runtime Optimization: In a traditional design, you might optimize the execution environment for the workload, changing the type and amount of resources such as RAM, swap, disk and network connectivity. With Azure Functions, you can make only minimal changes, such as which storage account to use.
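The Complexity point about tying discrete functions into one outward-facing namespace can be pictured as a facade. Clients see a single, consistent set of routes, while each route points at a separately deployed function. The sketch below is a hypothetical route table in Python, not Azure API Management configuration or its API. The paths and backend URLs are placeholders on the reserved example.com domain.

```python
from typing import Dict, Optional, Tuple

# Hypothetical mapping from one public namespace to separately deployed
# functions; the backend hostnames are placeholders.
ROUTES: Dict[Tuple[str, str], str] = {
    ("GET", "/orders"): "https://list-orders.example.com/api/run",
    ("POST", "/orders"): "https://create-order.example.com/api/run",
    ("GET", "/invoices"): "https://list-invoices.example.com/api/run",
}


def resolve(method: str, path: str) -> Optional[str]:
    """Return the backend function URL for a public route, or None if unmapped."""
    normalized = path.rstrip("/") or "/"  # treat "/orders/" the same as "/orders"
    return ROUTES.get((method.upper(), normalized))


print(resolve("post", "/orders/"))  # prints https://create-order.example.com/api/run
print(resolve("DELETE", "/orders"))  # prints None
```

Because the public paths are decoupled from the backend URLs, a function can be redeployed, renamed or split without clients noticing, as long as the table is updated to match.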

Traditional Architecture vs. Serverless Architecture

A typical set of design artifacts for a system includes a logical design, a technical design and a software architecture. The logical design typically defines the capabilities of a system and what it does, whereas the technical design typically defines what the system is. As you move to a serverless architecture, you become more concerned with what a system does than with what it is. In many IT shops, you're likely to find architecture diagrams that look something like Figure 2.

Figure 2: Traditional Technical Architecture

This type of artifact is particularly important to the folks in hosting, networking and the DBA group, as they need to know what to provision and configure. However, in a PaaS environment, you're focused on the functional properties and you leave provisioning details to the platform, having only to define the configuration of the PaaS services themselves. Your configuration input is kept in templates, and your conversations focus on capabilities, integration and functionality instead of on the optimal amounts of RAM, CPU and disk.

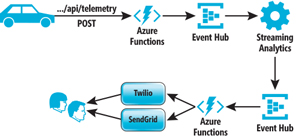

The resulting diagrams might be a bit closer to what you typically see in a logical diagram, as depicted in Figure 3. Such diagrams focus on the functional parts and how they tie together, rather than on the configuration of application hosts. This simplification of message and clarification of purpose will help not only in technical discussions about intent and implementation, but also in conveying the value of a system to a less technical audience, as the focus turns from what the system is to what it does for someone.

Figure 3: Serverless Architecture

So, What's Next?

As you can see, a serverless architecture built on Azure Functions lets you compose a solution from managed services while keeping your own code focused on business logic. Next time, we'll take an existing IoT implementation built to collect vehicle telemetry and store it in the cloud, and explore adding new capabilities. Stay tuned!

About the Authors

Joseph Fultz is a cloud solution architect at Microsoft. He works with Microsoft customers developing architectures for solving business problems leveraging Microsoft Azure. Formerly, Fultz was responsible for the development and architecture of GM's car-sharing program (mavendrive.com). Contact him on Twitter: @JosephRFultz or via e-mail at [email protected].

Darren Brust is a cloud solution architect at Microsoft where he spends most of his time assisting enterprise customers as they transition to Microsoft Azure. In his free time, you're likely to find him at one of his three children's sporting events or a local Austin coffee shop consuming copious amounts of coffee. You can reach him at [email protected] or on Twitter: @DarrenBrust.