The Data Science Lab

Multi-Class Classification Using PyTorch: Defining a Network

Dr. James McCaffrey of Microsoft Research explains how to define a network in installment No. 2 of his four-part series that will present a complete end-to-end production-quality example of multi-class classification using a PyTorch neural network.

The goal of a multi-class classification problem is to predict a value that can be one of three or more possible discrete values, such as "red," "yellow" or "green" for a traffic signal. This article is the second in a series of four articles that present a complete end-to-end production-quality example of multi-class classification using a PyTorch neural network. The example problem is to predict a college student's major ("finance," "geology" or "history") from their sex, number of units completed, home state and score on an admission test.

The process of creating a PyTorch neural network multi-class classifier consists of six steps:

- Prepare the training and test data

- Implement a Dataset object to serve up the data

- Design and implement a neural network

- Write code to train the network

- Write code to evaluate the model (the trained network)

- Write code to save and use the model to make predictions for new, previously unseen data

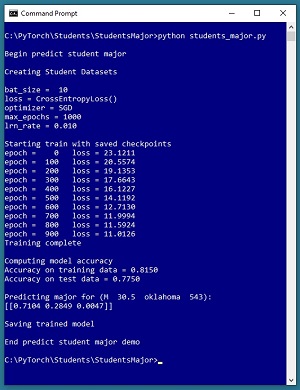

A good way to see where this series of articles is headed is to take a look at the screenshot of the demo program in Figure 1. The demo begins by creating Dataset and DataLoader objects which have been designed to work with the student data. Next, the demo creates a 6-(10-10)-3 deep neural network. The demo prepares training by setting up a loss function (cross entropy), a training optimizer function (stochastic gradient descent) and parameters for training (learning rate and max epochs).

Figure 1: Predicting Student Major Multi-Class Classification in Action

The demo trains the neural network for 1,000 epochs in batches of 10 items. An epoch is one complete pass through the training data. The training data has 200 items, so one training epoch consists of processing 20 batches of 10 training items.
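The batch arithmetic can be checked with a few lines of code. This is a minimal sketch rather than the demo's code: it assumes a stand-in dataset of 200 all-zero rows (the real demo reads the student data file) and wraps it in the standard TensorDataset and DataLoader classes.

```python
import torch as T

# Stand-in data (an assumption): 200 items, 6 predictors and 1 label each
x = T.zeros((200, 6), dtype=T.float32)
y = T.zeros(200, dtype=T.int64)
ds = T.utils.data.TensorDataset(x, y)

# Batches of 10 items, as in the demo
ldr = T.utils.data.DataLoader(ds, batch_size=10, shuffle=True)

batches_per_epoch = len(ldr)               # 200 / 10 = 20
total_updates = 1000 * batches_per_epoch   # batches processed over 1,000 epochs
print(batches_per_epoch, total_updates)
```

If the number of items were not evenly divisible by the batch size, the last batch of each epoch would simply be smaller, unless drop_last=True is passed to the DataLoader.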

During training, the demo computes and displays a measure of the current error (also called loss) every 100 epochs. Because error slowly decreases, it appears that training is succeeding. This is good because training failure is usually the norm rather than the exception. Behind the scenes, the demo program saves checkpoint information after every 100 epochs so that if the training machine crashes, training can be resumed without having to start from the beginning.

After training the network, the demo program computes the classification accuracy of the model on the training data (163 out of 200 correct = 81.50 percent) and on the test data (31 out of 40 correct = 77.50 percent). Because the two accuracy values are similar, it's likely that model overfitting has not occurred. After evaluating the trained model, the demo program saves the model using the state dictionary approach, which is the most common of three standard techniques.

The demo concludes by using the trained model to make a prediction. The raw input is (sex = "M", units = 30.5, state = "oklahoma", score = 543). The raw input is normalized and encoded as (sex = -1, units = 0.305, state = 0, 0, 1, score = 0.5430). The computed output vector is [0.7104, 0.2849, 0.0047]. These values represent the pseudo-probabilities of student majors "finance", "geology" and "history" respectively. Because the probability associated with "finance" is the largest, the predicted major is "finance."
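Turning the output vector into a predicted label is just a matter of finding the index of the largest value. The sketch below uses the three pseudo-probabilities quoted above; the variable names are illustrative and not taken from the demo program.

```python
# Pseudo-probabilities from the demo run, in class-index order
probs = [0.7104, 0.2849, 0.0047]
majors = ["finance", "geology", "history"]  # class indices 0, 1, 2

# The predicted class is the index of the largest pseudo-probability
pred_idx = max(range(len(probs)), key=lambda i: probs[i])
pred_major = majors[pred_idx]
print(pred_idx, pred_major)
```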

This article assumes you have an intermediate or better familiarity with a C-family programming language, preferably Python, but doesn't assume you know very much about PyTorch. The complete source code for the demo program, and the two data files used, are available in the download that accompanies this article. All normal error checking code has been omitted to keep the main ideas as clear as possible.

To run the demo program, you must have Python and PyTorch installed on your machine. The demo programs were developed on Windows 10 using the Anaconda 2020.02 64-bit distribution (which contains Python 3.7.6) and PyTorch version 1.7.0 for CPU installed via pip. Installation is not trivial. You can find detailed step-by-step installation instructions for this configuration at my blog.

The Student Data

The raw Student data is synthetic and was generated programmatically. There are a total of 240 data items, divided into a 200-item training dataset and a 40-item test dataset. The raw data looks like:

M 39.5 oklahoma 512 geology

F 27.5 nebraska 286 history

M 22.0 maryland 335 finance

. . .

M 59.5 oklahoma 694 history

Each line of tab-delimited data represents a hypothetical student at a hypothetical college. The fields are sex, units-completed, home state, admission test score and major. The first four values on each line are the predictors (often called features in machine learning terminology) and the fifth value is the dependent value to predict (often called the class or the label). For simplicity, there are just three different home states, and three different majors.

The raw data was normalized by dividing all units-completed values by 100 and all test scores by 1000. Sex was encoded as "M" = -1, "F" = +1. The home states were one-hot encoded as "maryland" = (1, 0, 0), "nebraska" = (0, 1, 0), "oklahoma" = (0, 0, 1). The majors were ordinal encoded as "finance" = 0, "geology" = 1, "history" = 2. Ordinal encoding for the dependent variable, rather than one-hot encoding, is required for the neural network design presented in the article. The normalized and encoded data looks like:

-1 0.395 0 0 1 0.5120 1

1 0.275 0 1 0 0.2860 2

-1 0.220 1 0 0 0.3350 0

. . .

-1 0.595 0 0 1 0.6940 2
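The normalization and encoding rules can be expressed as a short function. This is a sketch only: encode_line() and the three lookup tables are hypothetical names, and the demo's actual data files were prepared ahead of time rather than encoded on the fly.

```python
# Lookup tables taken from the encoding rules described above
SEX = {"M": -1, "F": 1}
STATE = {"maryland": [1, 0, 0], "nebraska": [0, 1, 0], "oklahoma": [0, 0, 1]}
MAJOR = {"finance": 0, "geology": 1, "history": 2}

def encode_line(line):
  # encode_line() is a hypothetical helper, not part of the demo program
  sex, units, state, score, major = line.split()
  x = [SEX[sex], float(units) / 100] + STATE[state] + [float(score) / 1000]
  return x, MAJOR[major]  # six predictor values, one ordinal class label

x, y = encode_line("M 39.5 oklahoma 512 geology")
print(x, y)
```

The six values in x correspond to the six input nodes of the network, and y is the ordinal-encoded major.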

After the structure of the training and test files was established, I coded a PyTorch Dataset class to read data into memory and serve the data up in batches using a PyTorch DataLoader object. You can find the article that explains how to create Dataset objects and use them with DataLoader objects at my site, The Data Science Lab.

The Overall Program Structure

The overall structure of the PyTorch multi-class classification program, with a few minor edits to save space, is shown in Listing 1. I indent my Python programs using two spaces rather than the more common four spaces.

Listing 1: The Structure of the Demo Program

# student_major.py
# PyTorch 1.7.0-CPU Anaconda3-2020.02
# Python 3.7.6 Windows 10

import numpy as np
import time
import torch as T

device = T.device("cpu")

class StudentDataset(T.utils.data.Dataset):
  # sex units state test_score major
  # -1 0.395 0 0 1 0.5120 1
  # 1 0.275 0 1 0 0.2860 2
  # -1 0.220 1 0 0 0.3350 0
  # sex: -1 = male, +1 = female
  # state: maryland, nebraska, oklahoma
  # major: finance, geology, history

  def __init__(self, src_file, n_rows=None): . . .
  def __len__(self): . . .
  def __getitem__(self, idx): . . .

# ----------------------------------------------------

def accuracy(model, ds): . . .

# ----------------------------------------------------

class Net(T.nn.Module):
  def __init__(self): . . .
  def forward(self, x): . . .

# ----------------------------------------------------

def main():
  # 0. get started
  print("Begin predict student major ")
  np.random.seed(1)
  T.manual_seed(1)

  # 1. create Dataset and DataLoader objects
  # 2. create neural network
  # 3. train network
  # 4. evaluate model
  # 5. save model
  # 6. make a prediction
  print("End predict student major demo ")

if __name__ == "__main__":
  main()

It's important to document the versions of Python and PyTorch being used because both systems are under continuous development. Dealing with versioning incompatibilities is a significant headache when working with PyTorch and is something you should not underestimate. The demo program imports the Python time module to timestamp saved checkpoints.

I prefer to use "T" as the top-level alias for the torch package. Most of my colleagues don't use a top-level alias and spell out "torch" dozens of times per program. Also, I use the full form of sub-packages rather than supplying aliases such as "import torch.nn.functional as functional." In my opinion, using the full form is easier to understand and less error-prone than using many aliases.

The demo program defines a program-scope CPU device object. I usually develop my PyTorch programs on a desktop CPU machine. After I get that version working, converting to a CUDA GPU system only requires changing the global device object to T.device("cuda") plus a minor amount of debugging.
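One way to make the CPU-to-GPU switch less manual is to pick the device at run time. The demo itself hard-codes the CPU device, so treat this as an optional variation rather than the article's approach; the single Linear layer is only a stand-in for a full network.

```python
import torch as T

# Fall back to the CPU when no CUDA GPU is visible to PyTorch
device = T.device("cuda" if T.cuda.is_available() else "cpu")

layer = T.nn.Linear(6, 10).to(device)             # move parameters to the device
x = T.zeros((1, 6), dtype=T.float32).to(device)   # inputs must be on the same device
y = layer(x)
print(device, y.device)
```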

The demo program defines just one helper function, accuracy(). All of the rest of the program control logic is contained in a main() function. It is possible to define other helper functions such as train_net(), evaluate_model(), and save_model(), but in my opinion this modularization approach makes the program more difficult to understand rather than easier to understand.

Defining a Neural Network for Multi-Class Classification

The first step when designing a PyTorch neural network class for multi-class classification is to determine its architecture. Neural architecture includes the number of input and output nodes, the number of hidden layers and the number of nodes in each hidden layer, the activation functions for the hidden and output layers, and the initialization algorithms for the hidden and output layer nodes.

The number of input nodes is determined by the number of predictor values (after normalization and encoding), six in the case of the Student data.

For a multi-class classifier, the number of output nodes is equal to the number of classes to predict. For the student data, there are three possible majors, so the neural network will have three output nodes. Notice that even though the majors are ordinal encoded -- so they are represented by just one value (0, 1 or 2) -- there are three output nodes, not one.

The demo network uses two hidden layers, each with 10 nodes, resulting in a 6-(10-10)-3 network. The number of hidden layers and the number of nodes in each layer are hyperparameters. Their values must be determined by trial and error guided by experience. The term "AutoML" is sometimes used for any system that programmatically, to some extent, tries to determine good hyperparameter values.

More hidden layers and more hidden nodes are not always better. The Universal Approximation Theorem (sometimes called the Cybenko Theorem) says, loosely, that for any neural architecture with multiple hidden layers, there is a roughly equivalent architecture that has just one hidden layer, provided that layer has enough nodes. For example, a neural network that has two hidden layers with 5 nodes each is, very roughly, comparable to a network that has one hidden layer with 25 nodes.

The definition of class Net is shown in Listing 2. In general, most of my colleagues and I use the term "network" or "net" to describe a neural network before it's been trained, and the term "model" to describe a neural network after it has been trained. However, the two terms are usually used interchangeably.

Listing 2: Multi-Class Neural Network Definition

class Net(T.nn.Module):
  def __init__(self):
    super(Net, self).__init__()
    self.hid1 = T.nn.Linear(6, 10)  # 6-(10-10)-3
    self.hid2 = T.nn.Linear(10, 10)
    self.oupt = T.nn.Linear(10, 3)

    T.nn.init.xavier_uniform_(self.hid1.weight)
    T.nn.init.zeros_(self.hid1.bias)
    T.nn.init.xavier_uniform_(self.hid2.weight)
    T.nn.init.zeros_(self.hid2.bias)
    T.nn.init.xavier_uniform_(self.oupt.weight)
    T.nn.init.zeros_(self.oupt.bias)

  def forward(self, x):
    z = T.tanh(self.hid1(x))
    z = T.tanh(self.hid2(z))
    z = self.oupt(z)  # no softmax: CrossEntropyLoss()
    return z

The Net class inherits from torch.nn.Module which provides much of the complex behind-the-scenes functionality. The most common structure for a multi-class classification network is to define the network layers and their associated weights and biases in the __init__() method, and the input-output computations in the forward() method.

The __init__() Method

The __init__() method begins by defining the demo network's three layers of nodes:

def __init__(self):
  super(Net, self).__init__()
  self.hid1 = T.nn.Linear(6, 10)  # 6-(10-10)-3
  self.hid2 = T.nn.Linear(10, 10)
  self.oupt = T.nn.Linear(10, 3)

The first statement invokes the __init__() constructor method of the Module class from which the Net class is derived. The next three statements define the two hidden layers and the single output layer. Notice that you don't explicitly define an input layer because no processing takes place on the input values.

The Linear() class defines a fully connected network layer. You can loosely think of each of the three layers as three standalone functions (they're actually class objects). Therefore the order in which you define the layers doesn't matter. In other words, defining the three layers in this order:

self.hid2 = T.nn.Linear(10, 10) # hidden 2

self.oupt = T.nn.Linear(10, 3) # output

self.hid1 = T.nn.Linear(6, 10) # hidden 1

has no effect on how the network computes its output. However, it makes sense to define the network's layers in the order in which they're used when computing an output value.
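The claim about definition order is easy to check. The sketch below defines two hypothetical classes, NetA and NetB, that differ only in the order of their layer definitions, copies the weights from one to the other by layer name, and compares the outputs. Explicit initialization is left out to keep the example short.

```python
import torch as T

class NetA(T.nn.Module):
  def __init__(self):
    super(NetA, self).__init__()
    self.hid1 = T.nn.Linear(6, 10)   # layers defined in usage order
    self.hid2 = T.nn.Linear(10, 10)
    self.oupt = T.nn.Linear(10, 3)
  def forward(self, x):
    z = T.tanh(self.hid1(x))
    z = T.tanh(self.hid2(z))
    return self.oupt(z)

class NetB(T.nn.Module):
  def __init__(self):
    super(NetB, self).__init__()
    self.hid2 = T.nn.Linear(10, 10)  # same layers, shuffled definition order
    self.oupt = T.nn.Linear(10, 3)
    self.hid1 = T.nn.Linear(6, 10)
  def forward(self, x):
    z = T.tanh(self.hid1(x))
    z = T.tanh(self.hid2(z))
    return self.oupt(z)

T.manual_seed(1)
a = NetA()
b = NetB()
b.load_state_dict(a.state_dict())  # copy weights and biases by layer name

x = T.tensor([[-1, 0.500, 0, 1, 0, 0.6540]], dtype=T.float32)
with T.no_grad():
  same = T.allclose(a(x), b(x))
print(same)
```

The order of the statements in forward() is what determines the computation. The order of the definitions in __init__() only affects the order in which PyTorch lists the layers.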

The demo program initializes the network's weights and biases like so:

T.nn.init.xavier_uniform_(self.hid1.weight)

T.nn.init.zeros_(self.hid1.bias)

T.nn.init.xavier_uniform_(self.hid2.weight)

T.nn.init.zeros_(self.hid2.bias)

T.nn.init.xavier_uniform_(self.oupt.weight)

T.nn.init.zeros_(self.oupt.bias)

If a neural network with one hidden layer has ni input nodes, nh hidden nodes, and no output nodes, there are (ni * nh) weights connecting the input nodes to the hidden nodes, and there are (nh * no) weights connecting the hidden nodes to the output nodes. Each hidden node and each output node has a special weight called a bias, so there'd be (nh + no) biases. For example, a 4-5-3 neural network has (4 * 5) + (5 * 3) = 35 weights and (5 + 3) = 8 biases. By the same reasoning, the 6-(10-10)-3 demo network has (6 * 10) + (10 * 10) + (10 * 3) = 190 weights and (10 + 10 + 3) = 23 biases.
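The counting rule extends to any number of layers: multiply each pair of adjacent layer sizes for the weights, and add up every layer size except the input layer for the biases. The helper below, count_params(), is a hypothetical name used only for illustration. It is applied to the 4-5-3 example and to the 6-(10-10)-3 architecture used by the demo.

```python
def count_params(sizes):
  # sizes lists the nodes per layer, e.g. [4, 5, 3] for a 4-5-3 network
  n_weights = sum(a * b for a, b in zip(sizes[:-1], sizes[1:]))
  n_biases = sum(sizes[1:])  # one bias per hidden node and per output node
  return n_weights, n_biases

print(count_params([4, 5, 3]))         # (35, 8)
print(count_params([6, 10, 10, 3]))    # (190, 23)
```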

Each layer has a set of weights which connect it to the previous layer. In other words, self.hid1.weight is a matrix of weights from the input nodes to the nodes in the hid1 layer, self.hid2.weight is a matrix of weights from the hid1 nodes to the hid2 nodes, and self.oupt.weight is a matrix of weights from the hid2 nodes to the output nodes.

PyTorch will automatically initialize weights and biases using a default mechanism. But it's good practice to explicitly initialize the values of a network's weights and biases, so that your results are reproducible. The demo uses xavier_uniform_() initialization on all weights, and it initializes all biases to 0. The xavier() initialization technique is called glorot() in some neural libraries, notably TensorFlow and Keras. Notice the trailing underscore character in the initializers' names. This indicates the initialization method modifies its weight matrix argument in place by reference, rather than as a return value.
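The in-place behavior and the reproducibility point can both be seen in a small experiment. This sketch uses a single stand-in Linear layer and a hypothetical make_layer() helper. Nothing is assigned from the initializer calls, yet the weights change, and two layers built after setting the same seed end up identical.

```python
import torch as T

def make_layer(seed):
  # make_layer() is a hypothetical helper used only for this illustration
  T.manual_seed(seed)                          # fix the random generator state
  layer = T.nn.Linear(6, 10)                   # default initialization happens here
  before = layer.weight.detach().clone()
  T.nn.init.xavier_uniform_(layer.weight)      # overwrites the weights in place
  T.nn.init.zeros_(layer.bias)                 # overwrites the biases in place
  changed = not T.equal(before, layer.weight)
  return layer, changed

lay1, changed1 = make_layer(1)
lay2, _ = make_layer(1)

print(changed1)                                # weights were modified in place
print(T.equal(lay1.weight, lay2.weight))       # same seed gives the same weights
print(float(lay1.bias.abs().sum()))            # all biases are zero
```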

PyTorch 1.7 supports a total of 13 different initialization functions, including uniform_(), normal_(), constant_() and dirac_(). For most multi-class classification problems, the uniform_() and xavier_uniform_() functions work well.

Based on my experience, for relatively small neural networks, in some cases plain uniform_() initialization works better than xavier_uniform_() initialization. The uniform_() function requires you to specify a range. For example, the statement:

T.nn.init.uniform_(self.hid1.weight, -0.05, +0.05)

would initialize the hid1 layer weights to random values between -0.05 and +0.05. Although the xavier_uniform_() function was designed for deep neural networks with many layers and many nodes, it usually works well with simple neural networks too, and it has the advantage of not requiring the two range parameters. This is because xavier_uniform_() computes the range values from the number of inputs to, and outputs from, the layer to which it is applied (the layer's fan-in and fan-out).
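The PyTorch documentation gives the Xavier uniform limit as gain * sqrt(6 / (fan_in + fan_out)), with a default gain of 1. The sketch below computes that limit by hand for a 6-input, 10-output layer and checks that no initialized weight falls outside it. The layer is a stand-in, not the demo network.

```python
import math
import torch as T

T.manual_seed(1)
layer = T.nn.Linear(6, 10)                 # fan-in = 6, fan-out = 10
T.nn.init.xavier_uniform_(layer.weight)

fan_out, fan_in = layer.weight.shape       # the weight matrix is stored as (out, in)
bound = math.sqrt(6.0 / (fan_in + fan_out))   # Xavier uniform limit with gain = 1
largest = float(layer.weight.abs().max())
print(bound, largest)
```

For this layer the limit is sqrt(6 / 16), about 0.61, which is much wider than the -0.05 to +0.05 range used in the uniform_() example.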

With a neural network defined as a class with no parameters as shown, you can instantiate a network object with a single statement:

net = Net().to(device)

Somewhat confusingly for PyTorch beginners, there is an entirely different approach you can use to define and instantiate a neural network. This approach uses the Sequential class to both define and create a network at the same time. This code creates a neural network that's almost the same as the demo network:

net = T.nn.Sequential(
  T.nn.Linear(6,10),
  T.nn.Tanh(),
  T.nn.Linear(10,10),
  T.nn.Tanh(),
  T.nn.Linear(10,3),
).to(device)

Notice this approach doesn't use explicit weight and bias initialization so you'd be using whatever the current PyTorch version default initialization scheme is (default initialization has changed at least three times since the PyTorch 0.2 version). It is possible to explicitly apply weight and bias initialization to a Sequential network but the technique is a bit awkward.
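One common way to initialize a Sequential network explicitly is the Module.apply() method, which calls a function on every sub-module. The sketch below is one possible approach, not necessarily the technique the article has in mind, and init_weights() is a hypothetical helper name.

```python
import torch as T

def init_weights(m):
  # init_weights() is a hypothetical helper; apply() calls it on every sub-module
  if isinstance(m, T.nn.Linear):
    T.nn.init.xavier_uniform_(m.weight)
    T.nn.init.zeros_(m.bias)

net = T.nn.Sequential(
  T.nn.Linear(6, 10),
  T.nn.Tanh(),
  T.nn.Linear(10, 10),
  T.nn.Tanh(),
  T.nn.Linear(10, 3),
)
net.apply(init_weights)  # Tanh modules are skipped by the isinstance() check

bias_total = sum(float(m.bias.abs().sum())
                 for m in net if isinstance(m, T.nn.Linear))
print(bias_total)
```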

When using the Sequential approach, you don't have to define a forward() method because one is automatically created for you. In almost all situations I prefer using the class definition approach over the Sequential technique. The class definition approach is lower level than the Sequential technique which gives you a bit more flexibility. Additionally, understanding the class definition approach is essential if you want to create complex neural architectures such as LSTMs, CNNs and Transformers.

The forward() Method

When using the class definition technique to define a neural network, you must define a forward() method that accepts input tensor(s) and computes output tensor(s). The demo program's forward() method is defined as:

def forward(self, x):
  z = T.tanh(self.hid1(x))
  z = T.tanh(self.hid2(z))
  z = self.oupt(z)  # no softmax: CrossEntropyLoss()
  return z

The x parameter is a batch of one or more tensors. The x input is fed to the hid1 layer and then tanh() activation is applied and the result is returned as a new tensor z. The tanh() activation will coerce all hid1 layer node values to be between -1.0 and +1.0. Next, z is fed to the hid2 layer and tanh() is applied. Then the new z tensor is fed to the output layer and no explicit activation is applied.

This design assumes that the network will be trained using the CrossEntropyLoss() function. This function will automatically apply softmax() activation, in the form of a special LogSoftmax() function. In the early versions of PyTorch, for multi-class classification, you would use the NLLLoss() function ("negative log likelihood loss") for training and apply explicit log of softmax() activation on the output nodes. However, it turns out that it's more efficient to combine NLLLoss() and log of softmax() and so the CrossEntropyLoss() function was created to do just that.
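The relationship between the two loss functions can be verified numerically. This sketch uses random stand-in logits and made-up target labels rather than output from the demo network, and compares CrossEntropyLoss() on raw logits against NLLLoss() on explicit log-softmax values.

```python
import torch as T

T.manual_seed(1)
logits = T.randn((4, 3))                          # stand-in raw outputs for 4 items
targets = T.tensor([0, 2, 1, 1], dtype=T.int64)   # ordinal-encoded class labels

ce = T.nn.CrossEntropyLoss()(logits, targets)
nll = T.nn.NLLLoss()(T.log_softmax(logits, dim=1), targets)
print(float(ce), float(nll))  # the two values agree
```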

A very common mistake is to use CrossEntropyLoss() but explicitly apply the softmax() function to the output nodes:

  . . .
  z = T.softmax(self.oupt(z), dim=1)  # WRONG if using CrossEntropyLoss()
  return z

This code would not generate a warning or an error, but network training would suffer because in effect you are applying softmax() twice -- once explicitly in the forward() method and once implicitly in the CrossEntropyLoss() method.
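A small numeric experiment shows why the double softmax hurts. The logits below are made up to represent a confident, correct prediction for class 0. With raw logits the loss is close to zero, but when softmax() is applied first the loss stays above 0.5, so the training signal is badly distorted.

```python
import torch as T

loss_func = T.nn.CrossEntropyLoss()
logits = T.tensor([[10.0, 0.0, 0.0]])   # confident and correct for class 0
target = T.tensor([0], dtype=T.int64)

loss_ok = loss_func(logits, target)                      # raw logits, as intended
loss_bad = loss_func(T.softmax(logits, dim=1), target)   # softmax applied twice
print(float(loss_ok), float(loss_bad))
```

The explicit softmax() squashes every output into the range 0 to 1, so the implicit second softmax can never produce a pseudo-probability near 1 for the correct class.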

There are many possible activation functions you can use for the hidden layer nodes. For relatively shallow neural networks with four or fewer hidden layers, the tanh() function ("hyperbolic tangent") often works well. In the early days of neural networks, using the sigmoid() function ("logistic sigmoid") was common but tanh() usually works better. For deep neural networks with many hidden layers, the relu() function ("rectified linear unit") is often used. PyTorch 1.7 supports 28 different activation functions, but most of these are used only with specialized architectures. For example, the gelu() activation function ("Gaussian error linear unit") is sometimes used with Transformer architecture for natural language processing scenarios.

A mildly annoying characteristic of PyTorch is that there are often multiple variations of the same function. For example, there are at least three tanh() functions: torch.tanh(), torch.nn.Tanh(), and torch.nn.functional.tanh(). Multiple versions of functions exist mostly because PyTorch is an open source project and its code organization evolved somewhat organically over time. There is no good way to deal with the confusion of multiple versions of PyTorch functions. You just have to live with it.
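The variants differ in form, not in the result they compute. The sketch below compares the function form, the class form and the tensor method form on a small made-up input. The torch.nn.functional.tanh() variant is left out because recent PyTorch versions mark it as deprecated.

```python
import torch as T

x = T.tensor([[-2.0, 0.0, 0.5, 3.0]])

y1 = T.tanh(x)        # function form
y2 = T.nn.Tanh()(x)   # class form: instantiate the module, then call it
y3 = x.tanh()         # tensor method form
print(T.allclose(y1, y2), T.allclose(y1, y3))
```

The class form is the one needed inside a Sequential definition, because Sequential expects module objects rather than plain functions.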

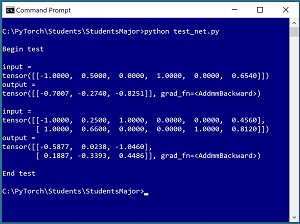

Testing the Network

It's good practice to test a neural network before trying to train it. The short program in Listing 3 shows an example. The test program instantiates a 6-(10-10)-3 neural network as described in this article and then feeds it an input corresponding to ("M", 50.0 units, "nebraska", 654 test score). The computed output values are (-0.7007, -0.2740, -0.8251). Notice that the raw outputs (often called logits) do not sum to 1 as they would if the softmax() function had been applied in the forward() method. Because the value at index [1], -0.2740, is the largest of the three values, the predicted student major is 1 = "geology." See the screenshot in Figure 2.
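To see the difference between logits and pseudo-probabilities, softmax can be applied by hand to the three output values quoted above. This is a plain-Python sketch for illustration. The largest logit is also the largest pseudo-probability, so the predicted class does not change.

```python
import math

logits = [-0.7007, -0.2740, -0.8251]   # raw outputs reported for the test input
majors = ["finance", "geology", "history"]

exps = [math.exp(v) for v in logits]
probs = [e / sum(exps) for e in exps]  # softmax: positive values that sum to 1

pred = max(range(3), key=lambda i: logits[i])
print(sum(logits), sum(probs), majors[pred])
```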

Listing 3: Testing the Network

# test_net.py

import torch as T

device = T.device("cpu")

class Net(T.nn.Module):
  def __init__(self):
    super(Net, self).__init__()
    self.hid1 = T.nn.Linear(6, 10)  # 6-(10-10)-3
    self.hid2 = T.nn.Linear(10, 10)
    self.oupt = T.nn.Linear(10, 3)

    T.nn.init.xavier_uniform_(self.hid1.weight)
    T.nn.init.zeros_(self.hid1.bias)
    T.nn.init.xavier_uniform_(self.hid2.weight)
    T.nn.init.zeros_(self.hid2.bias)
    T.nn.init.xavier_uniform_(self.oupt.weight)
    T.nn.init.zeros_(self.oupt.bias)

  def forward(self, x):
    z = T.tanh(self.hid1(x))
    z = T.tanh(self.hid2(z))
    z = self.oupt(z)  # assumes CrossEntropyLoss()
    return z

print("\nBegin test ")
T.manual_seed(1)
net = Net().to(device)

# male, 50.0 units completed,
# nebraska, 654 test score
x = T.tensor([[-1, 0.500, 0,1,0, 0.6540]],
  dtype=T.float32).to(device)
y = net(x)

print("\ninput = ")
print(x)
print("output = ")
print(y)

x = T.tensor([[-1, 0.250, 1,0,0, 0.4560],
              [+1, 0.660, 0,0,1, 0.8120]],
  dtype=T.float32).to(device)
y = net(x)

print("\ninput = ")
print(x)
print("output = ")
print(y)

print("\nEnd test ")

The three key statements in the test program are:

net = Net().to(device)

x = T.tensor([[-1, 0.500, 0,1,0, 0.6540]],

dtype=T.float32).to(device)

y = net(x)

The net object is instantiated as you might expect. Notice the input x is a 2-dimensional matrix (indicated by the double square brackets) rather than a 1-dimensional vector because the network is expecting a batch of items as input. You could verify this by setting up a second input like so:

x = T.tensor([[-1, 0.250, 1,0,0, 0.4560],

[+1, 0.660, 0,0,1, 0.8120]],

dtype=T.float32).to(device)
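Printing tensor shapes makes the batch convention concrete. In this sketch a Sequential network with the same 6-(10-10)-3 shape stands in for the demo's Net class. One input row produces one row of three logits, and two input rows produce two.

```python
import torch as T

T.manual_seed(1)
# Stand-in for the demo network with the same 6-(10-10)-3 shape
net = T.nn.Sequential(
  T.nn.Linear(6, 10), T.nn.Tanh(),
  T.nn.Linear(10, 10), T.nn.Tanh(),
  T.nn.Linear(10, 3))

x1 = T.tensor([[-1, 0.500, 0, 1, 0, 0.6540]], dtype=T.float32)
x2 = T.tensor([[-1, 0.250, 1, 0, 0, 0.4560],
               [+1, 0.660, 0, 0, 1, 0.8120]], dtype=T.float32)

y1 = net(x1)
y2 = net(x2)
print(x1.shape, y1.shape)   # batch of 1 item in, 1 row of logits out
print(x2.shape, y2.shape)   # batch of 2 items in, 2 rows of logits out
```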

If you're an experienced programmer but new to PyTorch, the call to the neural network seems to make no sense at all. Where is the forward() method? Why does it look like the net object is being re-instantiated using the x tensor?

As it turns out, the net object inherits a special Python __call__() method from the torch.nn.Module class. Any object that has a __call__() method can invoke the method implicitly using simplified syntax of object(input). Additionally, if a PyTorch object which is derived from Module has a method named forward(), then the __call__() method calls the forward() method. To summarize, the statement y = net(x) invisibly calls the inherited __call__() method which in turn calls the program-defined forward() method. The implicit call mechanism may seem like a major hack but in fact there are good reasons for it.

You can verify the calling mechanism by running this code:

y = net(x)

y = net.forward(x) # same output

y = net.__call__(x) # same output

In non-exploration scenarios, you should not call a neural network's forward() method directly. Use the implied call syntax, net(x), because the __call__() mechanism performs necessary behind-the-scenes actions, such as running any registered hooks, around the call to forward().
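Forward hooks are one concrete example of the behind-the-scenes work. The sketch below registers a hypothetical hook named record() on a one-layer stand-in network. The hook runs when the network is called as net(x), but not when forward() is called directly.

```python
import torch as T

net = T.nn.Linear(6, 3)   # a one-layer stand-in for the demo network
calls = []

def record(module, inputs, output):
  # hypothetical forward hook that just notes it was run
  calls.append("hook ran")

net.register_forward_hook(record)
x = T.zeros((1, 6), dtype=T.float32)

y = net(x)                     # goes through __call__(), so the hook runs
n_after_call = len(calls)
y = net.forward(x)             # bypasses __call__(), so the hook is skipped
n_after_forward = len(calls)
print(n_after_call, n_after_forward)
```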

Figure 2: Testing the Neural Network

If you look at the screenshot in Figure 2, you'll notice that the first result is displayed as:

output =

tensor([[-0.7007, -0.2740, -0.8251]], grad_fn=<AddmmBackward>)

The grad_fn is the "gradient function" associated with the tensor. A gradient is needed by PyTorch for use in training. In fact, the ability of PyTorch to automatically compute gradients is arguably one of the library's two most important features (along with the ability to compute on GPU hardware). In the demo test program, no training is going on, so PyTorch doesn't need to maintain a gradient on the output tensor. You can optionally instruct PyTorch that no gradient is needed like so:

with T.no_grad():
  y = net(x)

To summarize, when calling a PyTorch neural network to compute output during training, you should never use the no_grad() statement, but when not training, using the no_grad() statement is optional but more principled.
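The effect of no_grad() can be observed directly on the output tensor. This sketch uses a one-layer stand-in network. Outside the no_grad() block the output carries a grad_fn, and inside the block it does not.

```python
import torch as T

net = T.nn.Linear(6, 3)   # a one-layer stand-in for the demo network
x = T.zeros((1, 6), dtype=T.float32)

y_train = net(x)          # gradient tracking on
with T.no_grad():
  y_eval = net(x)         # gradient tracking off

print(y_train.requires_grad, y_train.grad_fn is not None)
print(y_eval.requires_grad, y_eval.grad_fn is None)
```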

Wrapping Up

Defining a PyTorch neural network for multi-class classification is not trivial but the demo code presented in this article can serve as a template for most scenarios. In situations where a neural network model tends to overfit, you can use a technique called dropout. Model overfitting is characterized by a situation where model accuracy on the training data is good, but model accuracy on the test data is poor.

You can add a dropout layer after any hidden layer. For example, to add two dropout layers to the demo network, you could modify the __init__() method like so:

def __init__(self):
  super(Net, self).__init__()
  self.hid1 = T.nn.Linear(6, 10)  # 6-(10-10)-3
  self.drop1 = T.nn.Dropout(0.50)
  self.hid2 = T.nn.Linear(10, 10)
  self.drop2 = T.nn.Dropout(0.25)
  self.oupt = T.nn.Linear(10, 3)

The first dropout layer will randomly zero out each node in the hid1 layer with probability 0.50 on each call to forward() during training, so roughly half of the nodes are ignored. The second dropout layer will do the same for the hid2 layer with probability 0.25. As I explained previously, these statements are class instantiations, not sequential calls of some sort, and so the statements could be defined in any order.

The forward() method would use the dropout layers like so:

def forward(self, x):
  z = T.tanh(self.hid1(x))
  z = self.drop1(z)
  z = T.tanh(self.hid2(z))
  z = self.drop2(z)
  z = self.oupt(z)
  return z

Using dropout introduces randomness into the training which tends to make the trained model more resilient to new, previously unseen inputs. Because dropout is intended to control model overfitting, in most situations you define a neural network without dropout, and then add dropout only if overfitting seems to be happening.
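Dropout is only active when a module is in training mode, which is set with the standard train() and eval() methods of Module. The sketch below applies a standalone Dropout layer to a made-up tensor of 1,000 ones. In training mode roughly half the values are zeroed and the survivors are scaled up by 1 / (1 - 0.50) = 2, and in evaluation mode the input passes through unchanged.

```python
import torch as T

T.manual_seed(1)
drop = T.nn.Dropout(0.50)
x = T.ones((1, 1000), dtype=T.float32)

drop.train()              # training mode: dropout is active
y_train = drop(x)
drop.eval()               # evaluation mode: dropout is a pass-through
y_eval = drop(x)

n_zero = int((y_train == 0).sum())           # roughly half of the 1,000 values
kept_vals = y_train[y_train != 0].unique()   # surviving values after scaling
print(n_zero, kept_vals, T.equal(y_eval, x))
```

For a network that contains dropout layers, this means calling net.train() before training and net.eval() before computing accuracy or making predictions.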