The Data Science Lab

Multi-Class Classification Using PyTorch: Model Accuracy

Dr. James McCaffrey of Microsoft Research concludes his four-part series on multi-class classification, which predicts a value that can be one of three or more possible discrete values, by explaining model accuracy and how to use and save a trained model.

The goal of a multi-class classification problem is to predict a value that can be one of three or more possible discrete values, for example "low," "medium" or "high" for a person's annual income. This article is the fourth in a series of four articles that present a complete end-to-end production-quality example of multi-class classification using a PyTorch neural network. The example problem is to predict a college student's major ("finance," "geology" or "history") from their sex, number of units completed, home state and score on an admission test.

The process of creating a PyTorch neural network multi-class classifier consists of six steps:

- Prepare the training and test data

- Implement a Dataset object to serve up the data

- Design and implement a neural network

- Write code to train the network

- Write code to evaluate the model (the trained network)

- Write code to save and use the model to make predictions for new, previously unseen data

Each of the six steps is complicated, and the six steps are tightly coupled, which adds to the difficulty. This article covers the fifth and sixth steps: evaluating the trained model, and saving and using it to make predictions.

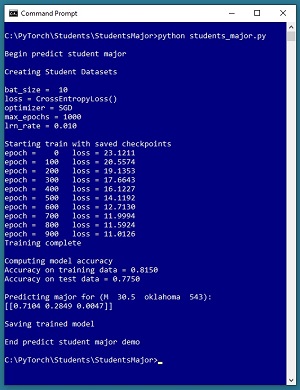

A good way to see where this series of articles is headed is to take a look at the screenshot of the demo program in Figure 1. The demo begins by creating Dataset and DataLoader objects which have been designed to work with the student data. Next, the demo creates a 6-(10-10)-3 deep neural network. The demo prepares training by setting up a loss function (cross entropy), a training optimizer function (stochastic gradient descent), and parameters for training (learning rate and max epochs).

Figure 1: Predicting Student Major Multi-Class Classification in Action

The demo trains the neural network for 1,000 epochs in batches of 10 items. An epoch is one complete pass through the training data. The training data has 200 items, so one training epoch consists of processing 20 batches of 10 training items.
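The batch arithmetic can be checked with a few lines of plain Python. This is a minimal sketch: batches_per_epoch() is a hypothetical helper, and rounding up assumes that a final partial batch is still processed, as it is with DataLoader's default drop_last=False.

```python
import math

def batches_per_epoch(n_items, batch_size):
  # Round up: a final partial batch (if any) still counts as a batch.
  return math.ceil(n_items / batch_size)

n_train = 200      # training items in the demo
bat_size = 10      # items per batch
max_epochs = 1000

per_epoch = batches_per_epoch(n_train, bat_size)
total_batches = per_epoch * max_epochs
print(per_epoch, total_batches)  # 20 20000
```

With 205 training items, the same helper would report 21 batches per epoch, and the last batch would hold only 5 items.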

During training, the demo computes and displays a measure of the current error (also called loss) every 100 epochs. Because error slowly decreases, it appears that training is succeeding. This is good because training failure is usually the norm rather than the exception. Behind the scenes, the demo program saves checkpoint information after every 100 epochs so that if the training machine crashes, training can be resumed without having to start from the beginning.

After training the network, the demo program computes the classification accuracy of the model on the training data (163 out of 200 correct = 81.50 percent) and on the test data (31 out of 40 correct = 77.50 percent). Because the two accuracy values are similar, it's likely that model overfitting has not occurred.

Next, the demo uses the trained model to make a prediction. The raw input is (sex = "M", units = 30.5, state = "oklahoma", score = 543). The raw input is normalized and encoded as (sex = -1, units = 0.305, state = 0, 0, 1, score = 0.5430). The computed output vector is [0.7104, 0.2849, 0.0047]. These values represent the pseudo-probabilities of student majors "finance," "geology" and "history" respectively. Because the probability associated with "finance" is the largest, the predicted major is "finance."

The demo concludes by saving the trained model using the state dictionary approach. This is the most common of three standard techniques.

This article assumes you have an intermediate or better familiarity with a C-family programming language, preferably Python, but doesn't assume you know very much about PyTorch. The complete source code for the demo program, and the two data files used, are available in the download that accompanies this article. All normal error checking code has been omitted to keep the main ideas as clear as possible.

To run the demo program, you must have Python and PyTorch installed on your machine. The demo programs were developed on Windows 10 using the Anaconda 2020.02 64-bit distribution (which contains Python 3.7.6) and PyTorch version 1.7.0 for CPU installed via pip. Installation is not trivial. You can find detailed step-by-step installation instructions for this configuration in my blog post.

The Student Data

The raw Student data is synthetic and was generated programmatically. There are a total of 240 data items, divided into a 200-item training dataset and a 40-item test dataset. The raw data looks like:

```
M 39.5 oklahoma 512 geology
F 27.5 nebraska 286 history
M 22.0 maryland 335 finance
. . .
M 59.5 oklahoma 694 history
```

Each line of tab-delimited data represents a hypothetical student at a hypothetical college. The fields are sex, units-completed, home state, admission test score and major. The first four values on each line are the predictors (often called features in machine learning terminology) and the fifth value is the dependent value to predict (often called the class or the label). For simplicity, there are just three different home states, and three different majors.

The raw data was normalized by dividing all units-completed values by 100 and all test scores by 1000. Sex was encoded as "M" = -1, "F" = +1. The home states were one-hot encoded as "maryland" = (1, 0, 0), "nebraska" = (0, 1, 0), "oklahoma" = (0, 0, 1). The majors were ordinal encoded as "finance" = 0, "geology" = 1, "history" = 2. Ordinal encoding for the dependent variable, rather than one-hot encoding, is required for the neural network design presented in the article. The normalized and encoded data looks like:

```
-1 0.395 0 0 1 0.5120 1
 1 0.275 0 1 0 0.2860 2
-1 0.220 1 0 0 0.3350 0
. . .
-1 0.595 0 0 1 0.6940 2
```
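The normalization and encoding rules can be expressed as a small function. This is a minimal sketch in plain Python, not code from the demo download. encode_line() and the lookup tables are hypothetical names, and the function assumes one whitespace-separated raw line per student in the format shown earlier.

```python
# Hypothetical lookup tables that mirror the encoding rules in the text.
SEX = {"M": -1.0, "F": 1.0}
STATES = {"maryland": (1.0, 0.0, 0.0),
          "nebraska": (0.0, 1.0, 0.0),
          "oklahoma": (0.0, 0.0, 1.0)}
MAJORS = {"finance": 0, "geology": 1, "history": 2}

def encode_line(line):
  # Raw fields: sex, units completed, home state, test score, major.
  sex, units, state, score, major = line.split()  # tabs or spaces
  x = [SEX[sex], float(units) / 100.0]  # sex code, units / 100
  x.extend(STATES[state])               # one-hot home state
  x.append(float(score) / 1000.0)       # score / 1000
  y = MAJORS[major]                     # ordinal class index
  return x, y

x, y = encode_line("M 39.5 oklahoma 512 geology")
print(x, y)
```

For the first raw line shown earlier, this produces predictors that match the first encoded line (-1, 0.395, 0, 0, 1, 0.512) and class index 1 for "geology."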

After the structure of the training and test files was established, I coded a PyTorch Dataset class to read data into memory and serve the data up in batches using a PyTorch DataLoader object. A Dataset class definition for the normalized encoded Student data is shown in Listing 1.

Listing 1: A Dataset Class for the Student Data

```python
# program-scope imports and device, as in Listing 3
import numpy as np
import torch as T
device = T.device("cpu")

class StudentDataset(T.utils.data.Dataset):

  def __init__(self, src_file, n_rows=None):
    all_xy = np.loadtxt(src_file, max_rows=n_rows,
      usecols=[0,1,2,3,4,5,6], delimiter="\t",
      skiprows=0, comments="#", dtype=np.float32)

    n = len(all_xy)
    tmp_x = all_xy[0:n,0:6]  # all rows, cols [0,5]
    tmp_y = all_xy[0:n,6]    # 1-D required

    self.x_data = \
      T.tensor(tmp_x, dtype=T.float32).to(device)
    self.y_data = \
      T.tensor(tmp_y, dtype=T.int64).to(device)

  def __len__(self):
    return len(self.x_data)

  def __getitem__(self, idx):
    preds = self.x_data[idx]
    trgts = self.y_data[idx]
    sample = {
      'predictors' : preds,
      'targets' : trgts
    }
    return sample
```

Preparing data and defining a PyTorch Dataset is not trivial. An earlier article in this series explains how to create Dataset objects and use them with DataLoader objects.

The Neural Network Architecture

In a previous article in this series, I described how to design and implement a neural network for multi-class classification for the Student data. One possible definition is presented in Listing 2. The code defines a 6-(10-10)-3 neural network with tanh() activation on the hidden nodes.

Listing 2: A Neural Network for the Student Data

```python
import torch as T

class Net(T.nn.Module):
  def __init__(self):
    super(Net, self).__init__()
    self.hid1 = T.nn.Linear(6, 10)  # 6-(10-10)-3
    self.hid2 = T.nn.Linear(10, 10)
    self.oupt = T.nn.Linear(10, 3)

    T.nn.init.xavier_uniform_(self.hid1.weight)
    T.nn.init.zeros_(self.hid1.bias)
    T.nn.init.xavier_uniform_(self.hid2.weight)
    T.nn.init.zeros_(self.hid2.bias)
    T.nn.init.xavier_uniform_(self.oupt.weight)
    T.nn.init.zeros_(self.oupt.bias)

  def forward(self, x):
    z = T.tanh(self.hid1(x))
    z = T.tanh(self.hid2(z))
    z = self.oupt(z)  # no softmax: CrossEntropyLoss() expects logits
    return z
```

If you are new to PyTorch, the number of design decisions for a neural network can seem intimidating. But with every program you write, you learn which design decisions are important and which don't affect the final prediction model very much, and the pieces of the puzzle ultimately fall into place.

The Overall Program Structure

The overall structure of the PyTorch multi-class classification program, with a few minor edits to save space, is shown in Listing 3. I indent my Python programs using two spaces rather than the more common four spaces.

Listing 3: The Structure of the Demo Program

```python
# students_major.py
# PyTorch 1.7.0-CPU Anaconda3-2020.02
# Python 3.7.6 Windows 10

import numpy as np
import time
import torch as T
device = T.device("cpu")

class StudentDataset(T.utils.data.Dataset):
  def __init__(self, src_file, n_rows=None): . . .
  def __len__(self): . . .
  def __getitem__(self, idx): . . .

# ----------------------------------------------------

def accuracy(model, ds): . . .

# ----------------------------------------------------

class Net(T.nn.Module):
  def __init__(self): . . .
  def forward(self, x): . . .

# ----------------------------------------------------

def main():
  # 0. get started
  print("Begin predict student major ")
  np.random.seed(1)
  T.manual_seed(1)

  # 1. create Dataset and DataLoader objects
  # 2. create neural network
  # 3. train network
  # 4. evaluate model
  # 5. make a prediction
  # 6. save model

  print("End predict student major demo ")

if __name__ == "__main__":
  main()
```

It's important to document the versions of Python and PyTorch being used because both systems are under continuous development. Dealing with versioning incompatibilities is a significant headache when working with PyTorch and is something you should not underestimate.

I like to use "T" as the top-level alias for the torch package. Most of my colleagues don't use a top-level alias and spell out "torch" dozens of times per program. Also, I use the full form of sub-packages rather than supplying aliases such as "import torch.nn.functional as functional." In my opinion, using the full form is easier to understand and less error-prone than using many aliases.

The demo program defines a program-scope CPU device object. I usually develop my PyTorch programs on a desktop CPU machine. After I get that version working, converting to a CUDA GPU system only requires changing the global device object to T.device("cuda") plus a minor amount of debugging.

The demo program defines just one helper function, accuracy(). All of the rest of the program control logic is contained in a single main() function. It is possible to define other helper functions such as train_net(), evaluate_model(), and save_model(), but in my opinion this modularization approach somewhat counterintuitively makes the program more difficult to understand rather than easier.

Saving Checkpoints

In almost all non-demo scenarios, it's a good idea to periodically save the state of the network during training so that if your training machine crashes, you can recover without having to start from scratch. The demo program shown running in Figure 1 saves checkpoints using these statements:

```python
if epoch % 100 == 0:
  dt = time.strftime("%Y_%m_%d-%H_%M_%S")
  fn = ".\\Log\\" + dt + "-" + \
    str(epoch) + "_checkpoint.pt"

  info_dict = {
    'epoch' : epoch,
    'net_state' : net.state_dict(),
    'optimizer_state' : optimizer.state_dict()
  }
  T.save(info_dict, fn)
```

A checkpoint is saved every 100 epochs, using a file name that looks like "2021_01_25-10_32_57-900_checkpoint.pt". The file name contains the date (January 25, 2021), the time (10:32:57 AM) and the epoch (900). The network state information is stored in a Python dictionary. The code assumes that there is an existing directory named Log. You must save the network state and the optimizer state. You can optionally save other information such as the epoch and the states of the NumPy and PyTorch random number generators.
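The file-naming logic can be moved into a small function and tested on its own. In this sketch, checkpoint_name() is a hypothetical helper. It uses os.path.join() instead of hard-coded Windows backslashes, so the same code also runs on Linux and macOS, and it accepts an optional time.struct_time so that a fixed time produces a predictable name.

```python
import os
import time

def checkpoint_name(epoch, when=None, log_dir="Log"):
  # Name format: YYYY_MM_DD-HH_MM_SS-<epoch>_checkpoint.pt
  if when is None:
    when = time.localtime()  # current local time
  dt = time.strftime("%Y_%m_%d-%H_%M_%S", when)
  return os.path.join(log_dir, dt + "-" + str(epoch) + "_checkpoint.pt")

fixed = time.strptime("2021-01-25 10:32:57", "%Y-%m-%d %H:%M:%S")
fn = checkpoint_name(900, fixed)
print(os.path.basename(fn))  # 2021_01_25-10_32_57-900_checkpoint.pt
```

If you'd rather not assume that the Log directory already exists, calling os.makedirs("Log", exist_ok=True) once before training creates it when needed.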

If the training machine crashes, you can recover training with code like:

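```python
fn = ".\\Log\\2021_01_25-10_32_57-900_checkpoint.pt"
chkpt = T.load(fn)
net = Net().to(device)
net.load_state_dict(chkpt['net_state'])
# re-create the optimizer for the new net before restoring its state;
# lrn_rate is a hypothetical name, and load_state_dict() restores the
# saved optimizer settings
optimizer = T.optim.SGD(net.parameters(), lr=lrn_rate)
optimizer.load_state_dict(chkpt['optimizer_state'])
. . .
epoch_saved = chkpt['epoch'] + 1
for epoch in range(epoch_saved, max_epochs):
  T.manual_seed(1 + epoch)
  # resume training as usual
```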

If you want to recover training exactly as it would have proceeded if your machine hadn't crashed, which is usually the case, you must set the PyTorch random number generator seed at the start of each training epoch, in the original training loop as well as in the resumed one. This is necessary because DataLoader uses the PyTorch random number generator to serve up training items in a random order, and as of PyTorch version 1.7, there is no built-in way to save the state of a DataLoader object. If you don't set the PyTorch random seed in each epoch, you can still recover from a crash, but the resulting training will be slightly different from what it would have been if your machine had not crashed, because the DataLoader will serve up the training items in a different order.
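The effect of per-epoch seeding can be seen without PyTorch. The sketch below uses Python's random.Random as a stand-in for the DataLoader's shuffling. It assumes that the item order for an epoch depends only on the seed set at the start of that epoch, which is the effect the T.manual_seed(1 + epoch) call is meant to have. The function names are hypothetical.

```python
import random

def epoch_order(n_items, epoch, base_seed=1):
  # Stand-in for a shuffling DataLoader whose generator is reseeded
  # with base_seed + epoch at the start of every epoch.
  rng = random.Random(base_seed + epoch)
  order = list(range(n_items))
  rng.shuffle(order)
  return order

def orders_per_epoch_seed(start_epoch, max_epochs, n_items=8):
  return [epoch_order(n_items, ep) for ep in range(start_epoch, max_epochs)]

def orders_single_seed(start_epoch, max_epochs, n_items=8, seed=1):
  # Generator seeded once when the (possibly restarted) program begins.
  rng = random.Random(seed)
  orders = []
  for _ in range(start_epoch, max_epochs):
    order = list(range(n_items))
    rng.shuffle(order)
    orders.append(order)
  return orders

# Checkpoint saved after epoch 5, so training resumes at epoch 6.
print(orders_per_epoch_seed(6, 10) == orders_per_epoch_seed(0, 10)[6:])  # True
print(orders_single_seed(6, 10) == orders_single_seed(0, 10)[6:])
```

With per-epoch seeding, the resumed run sees exactly the item orders that the uninterrupted run would have seen. With a single seed set at program start, the restarted program replays the epoch-0 order instead, so the two runs diverge.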

Computing Model Accuracy

Computing the prediction accuracy of a trained multi-class classifier is relatively simple, and you have many design alternatives. In high-level pseudo-code, computing accuracy looks like:

```
loop thru each data item
  get item predictor input values
  get item target values (0 or 1 or 2 . . )
  use inputs to compute output logit values
  find index of largest output logit value
  if index == target
    correct prediction
  else
    wrong prediction
end-loop
return num correct / (num correct + num wrong)
```

The target values are 0, 1 or 2, for "finance," "geology" or "history" majors. The computed output values, called logits, are stored in a tensor, such as (2.34, -1.09, 3.76). The index of the largest value in the computed outputs is the predicted class. In this example, the largest value, 3.76, is at index [2], so the predicted major is "history."
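A plain-Python version of the pseudo-code, with no PyTorch dependency, can make the logic concrete. This is a sketch: argmax() and accuracy_from_logits() are hypothetical helpers that work on lists of already-computed logits, not on a Dataset and a model as in Listing 4. The second logits vector in the usage check is made up for illustration.

```python
def argmax(values):
  # Index of the largest value; on a tie, the first such index wins.
  best = 0
  for i in range(1, len(values)):
    if values[i] > values[best]:
      best = i
  return best

def accuracy_from_logits(all_logits, targets):
  # Follows the pseudo-code: compare predicted index with target per item.
  n_correct = 0; n_wrong = 0
  for logits, target in zip(all_logits, targets):
    if argmax(logits) == target:
      n_correct += 1
    else:
      n_wrong += 1
  return n_correct / (n_correct + n_wrong)

print(argmax([2.34, -1.09, 3.76]))  # 2, i.e. "history"
```

For example, accuracy_from_logits([[2.34, -1.09, 3.76], [0.10, 0.90, -0.30]], [2, 0]) returns 0.5, because the first item is predicted correctly and the second is not.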

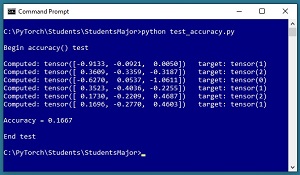

One of many possible implementations of an accuracy() function for the Student data, along with a short program to test the function, is shown in Listing 4. The screenshot in Figure 2 shows the output from the test program. The first data item's target value is 1 and the computed output logit values are (-0.9133, -0.0921, 0.0050). The largest value is at index [2], so the prediction is wrong. Of the six items, only the fifth item is predicted correctly, which isn't surprising because the network has not been trained.

Listing 4: A Model Accuracy Function

```python
# test_accuracy.py

import numpy as np
import torch as T
device = T.device("cpu")

class StudentDataset(T.utils.data.Dataset): . . .
  # see Listing 1

class Net(T.nn.Module): . . .
  # see Listing 2

def accuracy(model, ds):
  # assumes model.eval()
  # granular but slow approach
  n_correct = 0; n_wrong = 0
  for i in range(len(ds)):
    X = ds[i]['predictors']
    Y = ds[i]['targets']  # [0] [1] or [2]
    with T.no_grad():
      oupt = model(X)  # logits form

    big_idx = T.argmax(oupt)  # [0] [1] or [2]
    if big_idx == Y:
      n_correct += 1
    else:
      n_wrong += 1

  acc = (n_correct * 1.0) / (n_correct + n_wrong)
  return acc

print("\nBegin accuracy() test ")
T.manual_seed(1)
np.random.seed(1)

train_file = ".\\Data\\students_train.txt"
train_ds = StudentDataset(train_file, n_rows=6)

net = Net().to(device)
net.eval()
acc = accuracy(net, train_ds)
print("\nAccuracy = %0.4f" % acc)

print("\nEnd test ")
```

The accuracy() function is defined as a program-scope function, rather than as a method of the Net class, so that it accepts a neural network to evaluate and a PyTorch Dataset object that has been designed to work with the network. The idea here is that you created a Dataset object to use for training, so you can use the same Dataset to compute accuracy too.

Figure 2: Testing the Accuracy Function

The accuracy() function iterates through the Dataset object to process data items one at a time:

```python
for i in range(len(ds)):
  X = ds[i]['predictors']
  Y = ds[i]['targets']  # [0] [1] or [2]
  with T.no_grad():
    oupt = model(X)  # logits form
  . . .
```

Each item in a Dataset is a Python dictionary. Notice that the dictionary keys "predictors" and "targets" are magic strings. Most PyTorch programs are tightly coupled like this. An alternative, more complex approach is to parameterize the keys.

When iterating through a Dataset object in this way, the X input tensor is a 1-dimensional vector of six values and the Y target is a 0-dimensional tensor that holds a single class index. This works even though during training the inputs and the computed outputs are 2-dimensional tensors (and the targets are a 1-dimensional tensor of class indices, one per item in the batch). In other words, you can feed the Net object either a 2-dimensional tensor that holds multiple input items or a 1-dimensional tensor that holds a single input item. Conceptually, this is similar to function overloading.
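To see why the same network accepts either shape, it can help to write the forward computation out by hand. The sketch below is a NumPy stand-in, not PyTorch code. It assumes that T.nn.Linear computes x W^T + b, as its documentation states, and it uses made-up random weights, so only the output shapes are meaningful.

```python
import numpy as np

def linear(x, W, b):
  # Same computation as T.nn.Linear: x W^T + b. Works for a single
  # item (shape [n_in]) or a batch (shape [n_items, n_in]).
  return x @ W.T + b

# Made-up random weights for a 6-(10-10)-3 network (untrained).
rng = np.random.default_rng(1)
W1, b1 = rng.standard_normal((10, 6)), np.zeros(10)
W2, b2 = rng.standard_normal((10, 10)), np.zeros(10)
W3, b3 = rng.standard_normal((3, 10)), np.zeros(3)

def forward(x):
  z = np.tanh(linear(x, W1, b1))
  z = np.tanh(linear(z, W2, b2))
  return linear(z, W3, b3)  # logits, no softmax

one = np.array([-1, 0.305, 0, 0, 1, 0.543])  # single item: 1-D
batch = np.stack([one, one])                  # two items: 2-D
print(forward(one).shape, forward(batch).shape)  # (3,) (2, 3)
```

Matrix multiplication and bias broadcasting handle both shapes, and each row of the batch result equals the single-item result.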

The output value is computed in a no_grad() block because there's no need for the computed output tensor to have a gradient since it isn't used for training. The predicted class label is compared with the target class label like so:

```python
big_idx = T.argmax(oupt)  # [0] [1] or [2]
if big_idx == Y:
  n_correct += 1
else:
  n_wrong += 1
```

Both big_idx and Y are PyTorch tensors that contain a single value of 0, 1 or 2. In early versions of PyTorch you would have to extract the scalar values using the item() function and compare them as "if big_idx.item() == Y.item()". But current versions of PyTorch allow you to directly compare tensors that have a single value.

The statements that call the accuracy function are:

```python
net = Net().to(device)  # create network
net.eval()
acc = accuracy(net, train_ds)
print("\nAccuracy = %0.4f" % acc)
```

The neural network to evaluate is placed into eval() mode. If a neural network has a dropout layer or a batch normalization layer, you must set the network to train() mode during training and to eval() mode at all other times. In the case of the demo program, the neural network doesn't use dropout or batch normalization so you can omit setting the mode entirely. But in my opinion, it's good practice to explicitly set train() and eval() mode even when it's not technically necessary.

The accuracy() function iterates through a Dataset object one item at a time so that you can examine each item. A less flexible but more efficient design is to compute accuracy on the entire Dataset at once, using vectorized tensor operations:

```python
def accuracy_quick(model, dataset):
  # assumes model.eval()
  # en masse but quick
  n = len(dataset)
  X = dataset[0:n]['predictors']  # all X
  Y = T.flatten(dataset[0:n]['targets'])  # 1-D
  with T.no_grad():
    oupt = model(X)
  arg_maxs = T.argmax(oupt, dim=1)  # collapse cols
  num_correct = T.sum(Y==arg_maxs)
  acc = (num_correct * 1.0 / len(dataset))
  return acc.item()
```

This approach is less clear but runs faster. The technique is useful when you have a large Dataset and you only want the final accuracy result, or when you are computing accuracy many times inside a loop.
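The same en masse idea can be sketched in NumPy. accuracy_en_masse() is a hypothetical helper that works on an array of already-computed logits rather than on a model and a Dataset. Here np.argmax(..., axis=1) plays the role of T.argmax(oupt, dim=1), and the logits values are made up for illustration.

```python
import numpy as np

def accuracy_en_masse(logits, targets):
  # logits: [n, 3] computed outputs; targets: [n] class indices.
  preds = np.argmax(logits, axis=1)    # collapse columns, like dim=1
  return float(np.mean(preds == targets))

logits = np.array([[2.34, -1.09,  3.76],
                   [0.10,  0.90, -0.30],
                   [1.50,  0.20, -0.70]])  # made-up logits
targets = np.array([2, 0, 0])
print(accuracy_en_masse(logits, targets))
```

Because the mean of a Boolean array is the fraction of True values, no explicit counting is needed. Here two of the three predictions match their targets.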

Using a Trained Model

The demo program shown running in Figure 1 uses the trained model to make a prediction for new, previously unseen data. The input data is prepared like so:

```python
net.eval()
print("Predicting for (M 30.5 oklahoma 543): ")
inpt = np.array([[-1, 0.305, 0,0,1, 0.543]],
  dtype=np.float32)
inpt = T.tensor(inpt, dtype=T.float32).to(device)
```

The model is placed into eval() mode. This is good practice even when it isn't strictly necessary (when the model doesn't use dropout or batch normalization), because a newly created model is in train() mode by default.

The demo input starts with NumPy data rather than a PyTorch tensor to illustrate the idea that in most cases input data is generated using Python rather than PyTorch. The input values are placed in a 2-dimensional matrix (indicated by the double square brackets) to illustrate the idea that you can feed a single input item or multiple input items to a trained model. The raw input values of ("M", 30.5 units, oklahoma, 543 score) are normalized and encoded to (-1, 0.305, 0,0,1, 0.543) in the same way that the training data was normalized and encoded.

Next, these statements make a prediction:

```python
with T.no_grad():
  logits = net(inpt)  # values do not sum to 1.0
probs = T.softmax(logits, dim=1)  # tensor
probs = probs.numpy()  # numpy vector prints better
np.set_printoptions(precision=4, suppress=True)
print(probs)
```

The input tensor is fed to the Net object in a no_grad() block because gradients are only needed during training. The result value is a PyTorch tensor with three values (called logits) that don't necessarily sum to 1. The softmax() function converts the logits to three values that do sum to 1 so that they can be loosely interpreted as probabilities. The pseudo-probabilities are converted from PyTorch tensor to NumPy array because NumPy arrays can be printed nicely, and to illustrate the use of the numpy() function.
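The step from logits to pseudo-probabilities can be sketched in NumPy. This is an illustration only, not what T.softmax() does internally. The softmax() helper and the made-up logits are assumptions. The sketch also shows why the predicted class is the same whether you take the argmax of the logits or of the probabilities: softmax preserves the order of the values.

```python
import numpy as np

def softmax(logits):
  # Subtracting the row maximum avoids overflow in exp(); the result is
  # unchanged because softmax is unaffected by adding a constant.
  z = logits - np.max(logits, axis=1, keepdims=True)
  e = np.exp(z)
  return e / np.sum(e, axis=1, keepdims=True)

MAJORS = ["finance", "geology", "history"]
logits = np.array([[2.34, -1.09, 3.76]])  # made-up logits for one item
probs = softmax(logits)
print(probs.sum(axis=1))                  # approximately 1 per row
print(MAJORS[int(np.argmax(probs[0]))])   # history
```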

Saving a Trained Model

There are three main ways to save a PyTorch model to file: the older "full" technique, the newer "state_dict" technique, and the non-PyTorch ONNX technique. I recommend the "state_dict" technique which looks like:

```python
print("Saving trained model state dict ")
path = ".\\Models\\student_sd_model.pth"
T.save(net.state_dict(), path)
```

This code assumes that a subdirectory named Models exists relative to the program. The code should be mostly self-explanatory. It is common to use a ".pth" extension for a saved PyTorch model but you can use whatever you wish.

To load and use a saved model from a different program, you could write code like:

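```python
# the Net class definition (Listing 2) must be present in this program
model = Net().to(device)
path = ".\\Models\\student_sd_model.pth"
model.load_state_dict(T.load(path))
model.eval()  # good practice before making predictions

x = T.tensor([[-1, 0.305, 0,1,0, 0.543]],
  dtype=T.float32)
with T.no_grad():
  y = model(x)
print("Prediction is " + str(y))
```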

Notice that to load a saved PyTorch model from a program, the model's class definition must be available in that program. In other words, when you save a trained model this way, you save the weights and biases but not the model's definition. This seems a bit odd to most people who are new to PyTorch and are expecting a save() method to save everything instead of just the weights and biases.

There is an older "full" or "complete" technique to save a PyTorch model, but its name is rather misleading because it works just like the newer state_dict technique in the sense that code that uses the saved model must have access to the model's class definition. The older technique looks very much like the state_dict technique, but because it pickles the entire model object, the saved file is tied to the specific classes and directory structure used when the model was saved, so it can break after the code is refactored. This is why the state_dict technique is preferred. There is no reason to use the older technique except for backward compatibility.

A third way to save a trained PyTorch model is to use ONNX (Open Neural Network Exchange) technology. You can save a model with code like:

```python
path = ".\\Models\\student_onnx_model.onnx"
# the dummy input is only used to trace the model (shape and dtype)
dummy = T.tensor([[1, 0.5, 1,0,0, 0.5]],
  dtype=T.float32).to(device)
T.onnx.export(net, dummy, path,
  input_names=["input1"],
  output_names=["output1"])
```

However, you cannot load a saved ONNX model using a PyTorch program. Instead, you load the saved ONNX model and run it with an ONNX runtime, such as the onnxruntime package. One example is:

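```python
import onnx
import onnxruntime
import numpy as np

path = ".\\Models\\student_onnx_model.onnx"
model = onnx.load(path)
onnx.checker.check_model(model)  # validate the saved model

sess = onnxruntime.InferenceSession(path,
  providers=["CPUExecutionProvider"])
# ONNX Runtime expects NumPy arrays, not PyTorch tensors
x = np.array([[-1, 0.305, 0,1,0, 0.543]],
  dtype=np.float32)
y = sess.run(None, {"input1": x})
print(y)  # prediction: list holding the output1 logits array
```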

ONNX is still relatively immature so it's not fully supported by all neural network code libraries, and it has some bugs when using complex neural models. ONNX is useful when you want a system to use a trained model but you don't want to expose the underlying neural network class definition.

Wrapping Up

Learning how to create a PyTorch multi-class classifier usually can't be done in a strictly sequential manner. Based on my experience, most people need to use a spiral approach where they examine an overall program, then look at functional blocks of code such as defining the neural network and computing accuracy, and then examine the entire program again, and so on.

Creating and using neural networks using low-level code libraries such as PyTorch and TensorFlow gives you tremendous flexibility but is challenging. The difficulty of using TensorFlow led to the creation of the Keras library, which is essentially a high-level wrapper to make TensorFlow easier to use. I have seen several similar efforts to create a high-level wrapper library for PyTorch, but none of these wrapper libraries is being widely used at this time.