
Microsoft Open Sources 'Copilot Chat' Sample App for Customized Chatbots

Microsoft has open sourced a Copilot Chat sample app that organizations can use as a blueprint for their own customized chatbots built on advanced generative AI tech.

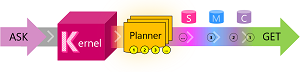

That tech is available because the sample app is built on Semantic Kernel, another Microsoft open source offering, which helps developers integrate large language models (LLMs), like the ones that power ChatGPT and Microsoft's "new Bing" search, into their apps. Microsoft described Semantic Kernel as "a lightweight SDK that lets you mix conventional programming languages, like C# and Python, with the latest in Large Language Model (LLM) AI 'prompts' with prompt templating, chaining, and planning capabilities."
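To make "prompt templating" and "chaining" concrete, here is a minimal sketch in plain Python. It does not use the Semantic Kernel SDK: the `render`, `make_step`, `run_chain` and `fake_llm` names are hypothetical helpers invented for illustration, and `fake_llm` merely echoes its prompt where a real app would call a model. Only the `{{$name}}` placeholder style is borrowed from Semantic Kernel's prompt templates.

```python
import re
from typing import Callable, Dict, List

# Matches placeholders such as {{$input}} or {{$language}}.
_PLACEHOLDER = re.compile(r"\{\{\$(\w+)\}\}")

Context = Dict[str, str]
Step = Callable[[Context], Context]


def render(template: str, variables: Context) -> str:
    """Fill each {{$name}} placeholder; unknown names become empty strings."""
    return _PLACEHOLDER.sub(lambda m: variables.get(m.group(1), ""), template)


def make_step(template: str, llm: Callable[[str], str]) -> Step:
    """Wrap a prompt template as a step: render, call the model, store the reply as 'input'."""
    def step(context: Context) -> Context:
        prompt = render(template, context)
        return {**context, "input": llm(prompt)}
    return step


def run_chain(steps: List[Step], context: Context) -> Context:
    """Run the steps in order, feeding each step's output into the next."""
    for step in steps:
        context = step(context)
    return context


def fake_llm(prompt: str) -> str:
    """Hypothetical stand-in for a real model call; it only echoes the prompt."""
    return f"[reply to: {prompt}]"


if __name__ == "__main__":
    summarize = make_step("Summarize in one sentence: {{$input}}", fake_llm)
    translate = make_step("Translate to {{$language}}: {{$input}}", fake_llm)
    result = run_chain(
        [summarize, translate],
        {"input": "Quarterly sales rose 4%.", "language": "French"},
    )
    print(result["input"])
```

Swapping `fake_llm` for a function that calls an actual model would leave the templating and chaining logic unchanged, which is roughly the separation an SDK of this kind aims for.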

Semantic Kernel integration, along with Azure cloud services, helps enterprise developers create modern chatbots with advanced functionality including natural language processing, voice commands enabled via speech recognition, and file uploading.

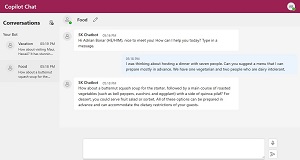

Copilot Chat (source: Microsoft).

"By leveraging LLM-based AI, you can make the chat smarter with your own up-to-date information through the Semantic Kernel," Microsoft said in a May 1 announcement. "Copilot Chat also offers scalability, increased efficiency, and personalized recommendations, making it the perfect addition to any enterprise. Best of all, it's an open-source sample app, meaning you can start developing your custom chatbot today!"

Semantic Kernel (source: Microsoft).

Primary benefits, Microsoft said, include:

- Improved User Experience: By providing personalized assistance and natural language processing, your own chatbot can improve the user experience for customers, students, and employees alike. Users can get the information they need quickly and easily, without having to navigate complex websites or wait for assistance from a customer service representative.

- Increased Efficiency: With a chatbot handling customer service or HR tasks, you can free up employees to focus on more complex tasks that require human intervention. This can increase efficiency and reduce costs for your organization.

- Personalized Recommendations: With natural language processing and a persistent memory store, your chatbot can make personalized recommendations for products, services, or educational resources. This can increase customer satisfaction and drive sales.

- Improved Accessibility: With speech recognition and file uploading, your chatbot can provide more accurate and personalized assistance to users. For example, patients who have difficulty navigating a website can use the chat more easily and receive the information they need quickly and efficiently.

- Scalability: With a chatbot handling customer service or educational tasks, you can easily scale up to meet increasing demand without having to hire more staff. This can reduce costs and increase revenue.

Using the sample app to create a customized enterprise chatbot involves a lot of moving parts. The setup requires the .NET 6.0 SDK, Node.js, Yarn, Visual Studio Code (optional), and either an Azure OpenAI resource or an account with OpenAI.
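Before cloning the repo, a developer might want a quick check that those tools are installed. The following is a rough sketch, not part of the sample app: it looks for the `dotnet`, `node`, `yarn` and `code` executables on the PATH and for an API key in the environment. The environment variable names are assumptions made for this example, and the script does not verify that the installed .NET SDK is version 6.0.

```python
import os
import shutil
from typing import Callable, Dict, Mapping, Optional

# Executable names for the tools listed above; True marks a required tool.
TOOLS: Dict[str, bool] = {"dotnet": True, "node": True, "yarn": True, "code": False}

# Hypothetical environment variable names, chosen for this sketch only.
KEY_VARS = ("AZURE_OPENAI_API_KEY", "OPENAI_API_KEY")


def check_prerequisites(
    which: Callable[[str], Optional[str]] = shutil.which,
    env: Optional[Mapping[str, str]] = None,
) -> Dict[str, str]:
    """Return a status string for each tool and for the API key."""
    env = os.environ if env is None else env
    report: Dict[str, str] = {}
    for tool, required in TOOLS.items():
        if which(tool):
            report[tool] = "found"
        else:
            report[tool] = "MISSING" if required else "missing (optional)"
    # Either key is enough: Azure OpenAI or OpenAI.
    has_key = any(env.get(name) for name in KEY_VARS)
    report["api key"] = "found" if has_key else "MISSING"
    return report


def is_ready(report: Mapping[str, str]) -> bool:
    """True when nothing required is missing."""
    return "MISSING" not in report.values()


if __name__ == "__main__":
    status = check_prerequisites()
    for name, state in status.items():
        print(f"{name:8} {state}")
    print("ready" if is_ready(status) else "not ready")
```

The `which` and `env` parameters exist only so the check can be exercised without touching the real system; called with no arguments, the function inspects the current machine.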

Once all that is in place, the app's repo provides instructions on how to build and run a WebApi back-end server and an accompanying front-end application, including how to set up the optional extras to enable Azure Speech Recognition and Persistent Memory Store.

To help with all that, the announcement post includes a 15-minute video that walks developers through the requisite steps.

Note that the Semantic Kernel Copilot Chat app is different from GitHub Copilot Chat for Visual Studio 2022, which was announced in March as a private preview. The "Copilot" moniker was borrowed from GitHub by its corporate owner, Microsoft, which has used the term to announce advanced AI integration into all kinds of products and services.

About the Author

David Ramel is an editor and writer at Converge 360.