Build 2023 Dev Conference to Detail Semantic Kernel (AI LLM Integration)

Amid daily buzz about advanced AI, Microsoft is devoting two sessions at next week's Build 2023 developer conference to its open source Semantic Kernel offering, which helps developers use AI large language models (LLMs) in their apps.

LLMs were popularized after last year's introduction of ChatGPT, the sentient-sounding chatbot based on the GPT (generative pre-trained transformer) series of LLMs from Microsoft partner OpenAI.

Microsoft in March unveiled Semantic Kernel, an internal incubation project that it open sourced, which provides an SDK to help developers mix conventional programming languages with the latest in LLM prompts (see the Visual Studio Magazine article, "Microsoft Open Sources Tool for GPT-4-Infused Apps").

Semantic Kernel (source: Microsoft).

The SDK provides templating, chaining and planning capabilities for creating AI-first apps faster and more easily, according to Microsoft, letting developers leverage LLMs like GPT-4, the latest, most advanced LLM from OpenAI.

"The SK extensible programming model combines natural language semantic functions, traditional code native functions, and embeddings-based memory unlocking new potential and adding value to applications with AI," the project's GitHub repo states. "SK supports prompt templating, function chaining, vectorized memory, and intelligent planning capabilities out of the box."

Prompt templating is related to the emerging discipline of "prompt engineering" (with jobs paying up to $335,000 per year), which seeks to help users get the most useful responses from LLMs by querying them with best-practice techniques.
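To make the templating and chaining ideas concrete, here is a minimal sketch in plain Python. It does not use the Semantic Kernel SDK or its API. The helper names (`render`, `fake_llm`, `semantic_function`, `chain`) are hypothetical, the model call is a stub that only echoes its prompt, and the placeholder style is merely modeled on the `{{$input}}` form seen in SK prompt templates.

```python
import re
from typing import Callable, Dict

# Matches template variables written as {{$name}} (assumed placeholder style).
_VAR = re.compile(r"\{\{\s*\$(\w+)\s*\}\}")


def render(template: str, variables: Dict[str, str]) -> str:
    """Replace each {{$name}} placeholder with its value from `variables`."""
    return _VAR.sub(lambda m: variables[m.group(1)], template)


def fake_llm(prompt: str) -> str:
    """Hypothetical stand-in for a model call; it just echoes the prompt."""
    return f"<completion for: {prompt}>"


def semantic_function(template: str) -> Callable[[str], str]:
    """Turn a prompt template into a function: fill it in, then call the model."""
    def run(text: str) -> str:
        return fake_llm(render(template, {"input": text}))
    return run


def chain(*steps: Callable[[str], str]) -> Callable[[str], str]:
    """Compose steps so that each one's output becomes the next one's input."""
    def run(text: str) -> str:
        for step in steps:
            text = step(text)
        return text
    return run


# A prompt-backed ("semantic") step followed by an ordinary ("native") code step.
summarize = semantic_function("Summarize in one sentence: {{$input}}")
shout = str.upper

pipeline = chain(summarize, shout)
print(pipeline("Build 2023 starts May 23."))
```

Swapping `fake_llm` for a real model client would leave the rest of the sketch unchanged, which is roughly the separation between prompts and conventional code that the SDK is described as offering.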

A Semantic Kernel ASK (source: Microsoft).

Better prompting is one of the four key benefits of Semantic Kernel as listed by Microsoft (see the article, "Microsoft Pushes Open Source 'Semantic Kernel' for AI LLM-Backed Apps"):

- Fast integration: SK is designed to be embedded in any kind of application, making it easy for you to test and get running with LLM AI.

- Extensibility: With SK, you can connect with external data sources and services -- giving your apps the ability to use natural language processing in conjunction with live information.

- Better prompting: SK's templated prompts let you quickly design semantic functions with useful abstractions and machinery to unlock LLM AI's potential.

- Novel-But-Familiar: Native code is always available to you as a first-class partner on your prompt engineering quest. You get the best of both worlds.

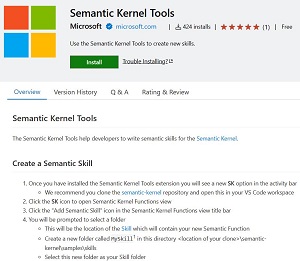

Just last month, Microsoft announced new developments for Semantic Kernel (see the article, "Semantic Kernel (AI LLM Integration) Gets VS Code Tools, Python Support"). Those developments included a VS Code extension available in the VS Code marketplace designed to help developers write skills for Semantic Kernel.

Semantic Kernel Tools (source: Microsoft).

Yesterday, Microsoft invited developers to attend two Semantic Kernel sessions at Build 2023, which kicks off next Tuesday, May 23. One session is available in person and online, while the other is in person only.

"We will be presenting at BUILD, where you can learn more about how Semantic Kernel can help you increase productivity of yourself and your team," said Microsoft's Evan Chaki. "Whether you are attending the conference in person or online, you won't want to miss these sessions!"

Here's how Chaki described the two sessions:

- Session 1: Building AI solutions with Semantic Kernel

"In this session, you will learn why we created Semantic Kernel (SK) and how it requires a new kind of developer mindset. Discover how SK has evolved alongside OpenAI's GPT-4 trajectory and what plugins will mean. We also discuss early signals we have gathered around SK use for copilots.

"This session is available for both in-person and online attendees and begins on Tuesday, May 23rd at 5:15pm Pacific Daylight Time."

- Session 2: Building an AI Copilot with Semantic Kernel in the GPT-4 era

"In this session, you will learn about Microsoft's emerging paved path for adding AI features with Open AI or Azure OpenAI Service. Explore the architecture of SK and its particular role in the entire AI production toolchain. Gain recent insights from SK hackathons and common use cases for LLM AI in practice.

"This session is only available for in-person attendees. We would like to see you in person on Wednesday, May 24th at 11:00am Pacific Daylight Time if you can attend."

The two sessions join many others related to AI, as Microsoft has arguably become the leader in AI among the cloud giants (and perhaps among all rival companies) thanks to a massive, $10 billion-plus investment in OpenAI. Borrowing the moniker "Copilot" from the GitHub Copilot "AI pair programmer" tool (Microsoft owns GitHub), the company has infused AI tech across a broad swath of its products and services.

Thus Copilot-related sessions at Build 2023 abound (see the article, "Copilot Tech Shines at Build 2023 As Microsoft Morphs into an AI Company").

Stay tuned for further news about AI, Copilot and Semantic Kernel at Build 2023.

About the Author

David Ramel is an editor and writer at Converge 360.