The Data Science Lab

Simple Numerical Optimization Using an Evolutionary Algorithm with C#

Dr. James McCaffrey of Microsoft Research says that when quantum computing becomes generally available, evolutionary algorithms for training huge neural networks could become a very important and common technique.

The goal of a numerical optimization problem is to find a vector of values that minimizes some cost function. The most fundamental example is minimizing the Sphere Function f(x0, x1, .. xn) = x0^2 + x1^2 + .. + xn^2. The optimal solution is X = (0, 0, .. 0) when f(X) = 0.0.

An evolutionary algorithm loosely mimics biological crossover (combining two existing parent solutions to create a new child solution) and mutation (slightly modifying a solution in a random way). In high-level pseudo-code:

create population of random solutions

loop many times

pick two parent solutions

create a child solution

mutate child

evaluate child

replace weak solution in pop with child

end-loop

return best solution found

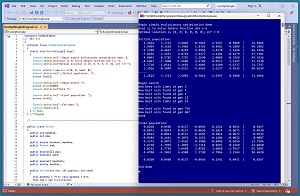

A good way to see where this article is headed is to take a look at the screenshot of a demo program in Figure 1. The demo program finds a solution for the Sphere Function with dim = 6. The demo sets up a population of eight random solutions. After 1,000 iterations of crossover and mutation, the demo returns a good, but not optimal, solution of (0.02, 0.05, 0.01, -0.01, 0.15, 0.04) with error = 0.0297.

[Click on image for larger view.] Figure 1: Simple Evolutionary Algorithm in Action

[Click on image for larger view.] Figure 1: Simple Evolutionary Algorithm in Action

This article assumes you have intermediate or better programming skill but doesn't assume you know anything about evolutionary algorithms. The demo is implemented using C# but you should have no trouble refactoring the code to a different C-family language if you wish.

The complete source code for the demo program is presented in this article. The code is also available online.

Overall Program Structure

I used Visual Studio 2022 (Community Free Edition) for the demo program. I created a new C# console application and checked the "Place solution and project in the same directory" option. I specified .NET version 6.0. I named the project EvoOptSimple. I checked the "Do not use top-level statements" option to avoid the confusing program entry point shortcut syntax.

The demo has no significant .NET dependencies, and any relatively recent version of Visual Studio with .NET (Core) or the older .NET Framework should work fine. You can also use the Visual Studio Code program if you like.

After the template code loaded into the editor, I right-clicked on file Program.cs in the Solution Explorer window and renamed the file to the more descriptive EvoOptSimpleProgram.cs. I allowed Visual Studio to automatically rename class Program to EvoOPtSimpleProgram.

The overall program structure is presented in Listing 1. The heart of the demo program is a Solver class with a Solve() method.

Listing 1: Overall Program Structure

using System;

namespace EvoOptSimple

{

internal class EvoOptSimpleProgram

{

static void Main(string[] args)

{

Console.WriteLine("Begin simple evo optimization demo ");

Console.WriteLine("Goal is to solve Sphere with dim = 6 ");

Console.WriteLine("Opt soln is [0,0,0,0,0,0], err = 0 ");

Solver solver = new Solver(6, 8, seed: 0);

Console.WriteLine("Initial population: ");

solver.Show();

Console.WriteLine("Begin search ");

solver.Solve(1000);

Console.WriteLine("Done ");

Console.WriteLine("Final population: ");

solver.Show();

Console.WriteLine("End demo ");

Console.ReadLine();

} // Main

} // Program

public class Solver

{

// class member fields here

public Solver(int dim, int popSize, int seed) { . . }

public double ComputeError(double[] soln) { . . }

public void Show() { . . }

public int[] PickParents() { . . }

public double[] CrossOver(double[] parent1,

double[] parent2) { . . }

public void Mutate(double[] soln) { . . }

public void Solve(int maxGen) { . . }

}

} // ns

In the Main() method, the Solver object is instantiated for a population of eight solutions, each of which is a vector with six values. The population size value is a hyperparameter that must be determined by experimentation.

The Solve() method performs the evolutionary algorithm using maxGen = 1,000 generations. The value of maxGen is a hyperparameter.

The Solver class contains methods Solve(), ComputeError(), Show(), PickParents(), CrossOver() and Mutate(). The methods are declared with public scope to make it easier to refactor the C# demo code to a language that doesn't support private scope.

The Solver Class

The Solver class has eight class members, one constructor and six methods. The code is presented in Listing 2. The dim member represented the number of values in a solution. The minGene and maxGene store the smallest and largest possible values in a solution. The demo hard-codes these values to -5.0 and +5.0, but you could pass these values as parameters to the Solver constructor.

Listing 2: The Solver Class

public class Solver

{

public int popSize;

public int dim;

public double minGene, maxGene;

public Random rnd;

public double[][] pop;

public double[] errs;

public double[] bestSoln;

public double bestErr;

public Solver(int dim, int popSize, int seed)

{

this.minGene = -5.0; this.maxGene = 5.0;

this.rnd = new Random(seed);

this.dim = dim;

this.popSize = popSize;

this.pop = new double[popSize][];

for (int i = 0; i < popSize; ++i)

this.pop[i] = new double[dim];

this.errs = new double[popSize];

for (int i = 0; i < popSize; ++i)

{

for (int j = 0; j < dim; ++j)

this.pop[i][j] = (this.maxGene - this.minGene) *

this.rnd.NextDouble() + this.minGene;

this.errs[i] = this.ComputeError(this.pop[i]);

}

Array.Sort(this.errs, this.pop); // parallel sort

this.bestSoln = new double[dim];

for (int j = 0; j < dim; ++j)

this.bestSoln[j] = this.pop[0][j];

this.bestErr = this.errs[0];

} // ctor()

// other Solver methods here

}

Because evolutionary algorithms use random operations for crossover and mutation, the Solver class uses a Random object member rather than relying on a global Random object. The pop (population) member is an array of arrays, for example, pop[2][3] is the value of solution [2] at index [3].

The errs member is a vector that holds the error for each solution in the population. For example, if pop[2] = (0, 0, 0, 3, 0, 0) then errs[2] holds 0^2 + . . + 3^2 + . . 0^2 = 9.0. For simplicity, the design of the Solver class uses parallel arrays for the population of solutions and the associated errors. An alternative design is to create a program-defined class object that holds a vector/solution and its associated error.

The bestSoln member is a vector that holds the best solution found at any point by the Solve() method. The bestErr vaue is the associated best (lowest) error.

The Solver constructor allocates memory for the population and errors vector, and then gives each solution in the population random values between minGene and maxGene. The error of each initial random solution in the population is computed by a ComputeError() method.

After the initial values of the population and errors vectors have been assigned, the constructor sorts both in parallel, from smallest error to largest, using the built-in Array.Sort() method. After sorting, the best solution is at pop[0] and its error is at errs[0]. These values are copied to the bestSoln and bestErr members.

Crossover

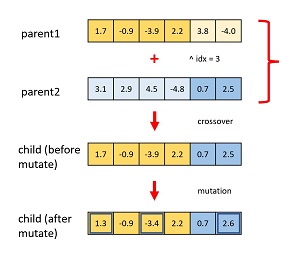

The crossover and mutate operations are illustrated in Figure 2. The crossover mechanism combines two parent solutions to create a new, hopefully better, child solution. The first step is to pick two parent indices from the existing population.

[Click on image for larger view.] Figure 2: Crossover and Mutation

[Click on image for larger view.] Figure 2: Crossover and Mutation

The demo program uses the simple approach of picking one parent index from the top/best half of the population and the other parent index from the other half of the population:

public int[] PickParents()

{

int first = this.rnd.Next(0, this.popSize / 2);

int second = this.rnd.Next(this.popSize / 2, this.popSize);

int flip = this.rnd.Next(0, 2); // 0 or 1

if (flip == 0)

return new int[] { first, second };

else

return new int[] { second, first };

}

The two index values are returned in an array of type int. The first and second index values are randomly assigned to either result[0] or result[1] so that parent1 is sometimes at [0] and sometimes at [1].

A crossover point is randomly selected. The child is created using the left part of the first parent and the right part of the second parent. The implementation is:

public double[] CrossOver(double[] parent1,

double[] parent2)

{

int idx = this.rnd.Next(1, this.dim - 1);

double[] child = new double[this.dim];

for (int k = 0; k < idx; ++k)

child[k] = parent1[k];

for (int k = idx; k < this.dim; ++k)

child[k] = parent2[k];

return child;

}

There are dozens of more complicated ways to implement crossover, but the simple approach used in the demo is usually effective.

Mutation

The demo program mutates a newly created child solution using this code:

public void Mutate(double[] soln)

{

double lo = -0.25; double hi = 0.25;

for (int j = 0; j < soln.Length; ++j) {

int flip = this.rnd.Next(0, 2); // 0 or 1

if (flip == 1) {

double delta = (hi - lo) * this.rnd.NextDouble() + lo;

soln[j] += delta;

}

}

}

The Mutate() method walks through each value in a child solution and flips a virtual coin. If the coin is heads, the child value is left alone. If the coin is tails, the child value is incremented or decremented by a random value between -0.25 and +0.25. These limits are hyperparameters that must be determined by experimentation.

The Solve() Method

The heart of the demo program is the Solve() method. The implementation begins with:

public void Solve(int maxGen)

{

for (int gen = 0; gen < maxGen; ++gen) {

// 1. make a child

int[] parentIdxs = this.PickParents();

double[] parent1 = this.pop[parentIdxs[0]];

double[] parent2 = this.pop[parentIdxs[1]];

double[] child = this.CrossOver(parent1, parent2);

. . .

The PickParents() method returns indices of two solutions in the population in an int array. Those two indices are used to reference two parent solutions, which are then used to create a child solution.

Next, the newly created child solution is mutated and the mutated child is evaluated:

// 2. mutate and evaluate

this.Mutate(child);

double childErr = this.ComputeError(child);

At this point, the Solve() method checks to see if the newly created child is a new best solution:

if (childErr < this.bestErr) {

Console.WriteLine("New best soln found at gen " + gen);

for (int i = 0; i < child.Length; ++i)

this.bestSoln[i] = child[i];

this.bestErr = childErr;

}

Next, the child is used to replace a weak existing solution:

// 3. replace a weak soln with child

int idx = this.rnd.Next(this.popSize / 2, this.popSize);

for (int j = 0; j < this.dim; ++j)

this.pop[idx][j] = child[j];

this.errs[idx] = childErr;

One of the solutions in the bottom half (worse) of the population is replaced by the new child. The demo overwrites the values of the selected weak solution. An alternative is to replace by reference.

The demo uses an optional mechanism called immigration. A new random solution (immigrant) is created and then is used to replace a randomly selected weak solution. The immigrant is created using the same code as an initial solution:

// 4. create immigrant

double[] imm = new double[this.dim];

for (int j = 0; j < this.dim; ++j)

imm[j] = (this.maxGene - this.minGene) *

this.rnd.NextDouble() + this.minGene;

double immErr = this.ComputeError(imm);

The new immigrant must be checked to see if it produced a new best solution by chance:

if (immErr < this.bestErr) {

Console.WriteLine("New best soln (imm) at gen " + gen);

for (int i = 0; i < child.Length; ++i)

this.bestSoln[i] = child[i];

this.bestErr = childErr;

}

The immigrant replaces an existing solution in the bottom half of the population:

idx = this.rnd.Next(this.popSize / 2, this.popSize);

this.pop[idx] = imm;

this.errs[idx] = immErr;

After the new child and new immigrant solutions have been placed into the population, the population and errors are sorted in parallel so that the solutions are ordered from best to worst for the next generation:

. . .

Array.Sort(this.errs, this.pop);

} // each gen

} // Solve() method

} // Solver class

Notice that creating an immigrant and placing it in the population could possibly overwrite the newly created child solution. In most scenarios this isn't problem but you can add code to prevent this from happening.

Computing Error

The demo defines a ComputeError() method to compute the error/cost of a solution vector. For the demo program, the error is just the value of the Sphere Function, which is the sum of the squared values in a solution:

public double ComputeError(double[] soln)

{

double result = 0.0;

for (int j = 0; j < soln.Length; ++j)

result += soln[j] * soln[j];

return result;

}

When using an evolutionary algorithm you need to define a method for the specific problem being optimized. In some situations you might need to pass additional information to the Solver class. For example, if the problem is to design an aircraft wing that minimizes drag, you'd need to pass all the relevant information needed to construct a virtual wing and determine its drag.

In research, the term cost function is usually preferred to error function, but in informal communication the terms error, cost and loss are often used interchangeably.

The Solver class contains a Show() method that displays the solutions in the population and the associated error of each solution. The implementation is presented in Listing 3.

Listing 3: The Show() Method

public void Show()

{

for (int i = 0; i < this.popSize; ++i) {

for (int j = 0; j < this.dim; ++j) {

Console.Write(this.pop[i][j].ToString("F4")

.PadLeft(9) + " ");

}

Console.WriteLine(" | " + this.errs[i].ToString("F4")

.PadLeft(10));

}

Console.WriteLine("-----");

for (int j = 0; j < this.dim; ++j)

Console.Write(this.bestSoln[j].ToString("F4").

PadLeft(9) + " ");

Console.WriteLine(" | " + this.bestErr.ToString("F4")

.PadLeft(10));

} // Show

The Solve() method doesn't return a value and so the best solution is determined by examining the output of the Show() method. An alternative design is for Solve() to return the best solution found.

Wrapping Up

Evolutionary algorithms aren't used very often. For example, you can think of training a deep neural network as a numerical optimization problem where the goal is to find values for the network's weights that minimize the error between computed output values and known correct target values from training data. Because a standard neural network is essentially a math function that is differentiable (in the sense of mathematical calculus), neural networks can be trained using a standard calculus minimization technique called backpropagation.

However, researchers continue to explore evolutionary algorithms to train deep neural networks. Evolutionary algorithms can be used for neural networks that have unusual architectures that aren't differentiable. There are also some research results that suggest evolutionary algorithms can be useful when working with huge neural networks with billons of weights, such as networks used for natural language processing.

In general, evolutionary algorithms are much slower than calculus-based optimizations techniques. But there is some speculation that when quantum computing becomes generally available, evolutionary algorithms for training huge neural networks could become a very important and common technique.